AI for Law Firms: What Actually Works in Production (Beyond the Demos)

Last updated on November 24, 2025

AI adoption is accelerating across industries at an unprecedented pace. However, most implementations remain in pilot stages. The gap between experimentation and production deployment in legal AI stems from challenges that are especially acute in legal work. Malpractice liability, attorney-client privilege, regulatory compliance frameworks, and the need for near-perfect accuracy create barriers that impressive demos often sidestep.

This creates a critical question for law firm leaders and legal tech founders: which AI capabilities are ready for production, and which remain too immature for deployment despite compelling demonstrations?

Understanding this distinction matters because the cost of getting AI implementation wrong extends beyond wasted budget. Deploying immature AI capabilities creates malpractice exposure, regulatory violations, and client trust erosion that can take years to repair. This article examines what actually works in production legal AI today, what’s still evolving, and the infrastructure requirements that separate successful deployments from expensive failures.

Legal AI Use Cases That Work in Production Today

While most legal AI remains stuck in pilot programs, three capabilities have proven themselves under real production conditions.

AI-Powered Intake Automation for Law Firms

Client intake creates a brutal economics problem for law firms. Every missed call is lost revenue. Every incomplete intake form means follow-up work. Every unqualified lead that reaches an attorney wastes billable time. Before AI, both options were costly: hire more staff, or risk letting calls go to voicemail.

Casegen tackled this for personal injury law firms facing exactly that bottleneck. Drawing on its experience, Casegen defined clear requirements for how structured intake should work in practice. With that input, Softcery built an AI voice agent for the company that handles structured legal intake with the thoroughness and reliability of an experienced paralegal. The agent asks the right questions in the right order, adapts when clients give unexpected information, and captures every critical detail without skipping steps or rushing through important context.

The system collects client contact information, case type, injury details, involved parties, and qualifying criteria. More importantly, it knows when to probe deeper and when to move forward. When a client mentions a pre-existing condition, the agent explores how it relates to the current injury. If someone describes an accident involving multiple vehicles, the agent systematically captures each party’s information without missing details.

But production-ready intake automation extends beyond the voice conversation. Casegen’s system includes rule-based routing that transfers calls to human staff when specific criteria are met (for example, when a case falls outside standard parameters or requires attorney judgment). Automated notifications alert attorneys immediately with structured case summaries. The system supports multiple agent types: inbound agents handle incoming calls 24/7, outbound agents contact prospects during business hours, and medical agents (in development) follow up with healthcare providers for supporting documentation.
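Rule-based routing of this kind can be sketched as an ordered list of predicates evaluated against the structured intake collected so far. This is a minimal illustration under stated assumptions, not Casegen’s implementation: the `Intake` fields, the set of standard case types, and the rules themselves are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Intake:
    # Hypothetical structured fields captured by the voice agent.
    case_type: str
    caller_requests_attorney: bool = False
    mentions_existing_counsel: bool = False
    details: dict = field(default_factory=dict)


# Hypothetical case types the firm treats as "standard parameters".
STANDARD_CASE_TYPES = {"auto_accident", "slip_and_fall", "dog_bite"}

# Ordered (reason, predicate) rules; the first matching rule triggers a transfer.
TRANSFER_RULES: list[tuple[str, Callable[[Intake], bool]]] = [
    ("caller asked for an attorney", lambda i: i.caller_requests_attorney),
    ("already represented; needs attorney judgment", lambda i: i.mentions_existing_counsel),
    ("case type outside standard parameters", lambda i: i.case_type not in STANDARD_CASE_TYPES),
]


def route(intake: Intake) -> Optional[str]:
    """Return the reason to transfer to human staff, or None to let the agent continue."""
    for reason, predicate in TRANSFER_RULES:
        if predicate(intake):
            return reason
    return None


print(route(Intake(case_type="auto_accident")))        # None
print(route(Intake(case_type="medical_malpractice")))  # case type outside standard parameters
```

Keeping rules as data rather than burying them in the prompt means attorneys can audit exactly why a call was transferred.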

The Zapier integration enables bi-directional workflow automation with existing legal technology stacks. Law firms trigger outbound calls automatically from case management events—when a new lead enters Clio, when a medical record request deadline approaches, or when follow-up timing criteria are met. Call outcomes flow back through Zapier to update CRM systems, create tasks, and trigger document generation, eliminating manual data transfer.
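One way to picture the bi-directional flow is as two lookup tables: case-management events that start outbound calls, and call outcomes that fan out to downstream actions. The event and action names below are hypothetical placeholders, not Clio or Zapier identifiers; in the described setup, Zapier would carry these payloads between systems.

```python
from typing import Optional

# Hypothetical case-management events mapped to outbound call scenarios.
CALL_TRIGGERS = {
    "lead.created": "new_lead_followup",
    "medical_request.deadline_near": "medical_records_reminder",
    "followup.due": "scheduled_followup",
}

# Hypothetical call outcomes mapped to downstream actions (CRM update, task, document).
OUTCOME_ACTIONS = {
    "qualified": ["update_crm_stage", "create_attorney_review_task", "generate_engagement_letter"],
    "not_qualified": ["update_crm_stage"],
    "no_answer": ["create_callback_task"],
}


def call_for_event(event_type: str) -> Optional[str]:
    """Return the outbound call scenario an incoming event should start, if any."""
    return CALL_TRIGGERS.get(event_type)


def actions_for_outcome(outcome: str) -> list[str]:
    """Return downstream actions for a call outcome; unknown outcomes go to a human."""
    return OUTCOME_ACTIONS.get(outcome, ["flag_for_manual_review"])
```

The default branch is the design choice worth copying: an unrecognized outcome is routed to a person instead of being silently dropped.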

Observability infrastructure built on Langfuse provides continuous quality monitoring across every interaction. The system tracks conversation quality metrics, agent response accuracy, question coverage completeness, and client satisfaction indicators. Attorneys review aggregated performance data showing where AI agents excel and where human intervention patterns emerge. When evaluation scores drop below thresholds, the system flags conversations for manual review, so issues surface quickly, before they affect client experience at scale.
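Threshold-based flagging can be sketched independently of any particular observability tool. This assumes each conversation already carries per-metric evaluation scores between 0 and 1 (for example, recorded as scores in a tracing tool such as Langfuse); the metric names and threshold values are illustrative assumptions.

```python
# Hypothetical per-metric minimums; real thresholds would be tuned per firm.
THRESHOLDS = {
    "response_accuracy": 0.90,
    "question_coverage": 0.95,
    "client_satisfaction": 0.70,
}


def failing_metrics(scores: dict[str, float]) -> list[str]:
    """Names of metrics below threshold; a missing score counts as failing."""
    return [name for name, minimum in THRESHOLDS.items() if scores.get(name, 0.0) < minimum]


def review_queue(conversations: dict[str, dict[str, float]]) -> dict[str, list[str]]:
    """Map conversation id -> failing metrics, for conversations that need manual review."""
    queue = {}
    for conv_id, scores in conversations.items():
        failures = failing_metrics(scores)
        if failures:
            queue[conv_id] = failures
    return queue


queue = review_queue({
    "call-001": {"response_accuracy": 0.97, "question_coverage": 1.0, "client_satisfaction": 0.9},
    "call-002": {"response_accuracy": 0.82, "question_coverage": 0.96, "client_satisfaction": 0.8},
})
print(queue)  # {'call-002': ['response_accuracy']}
```

Treating a missing score as a failure is deliberate: a conversation that was never evaluated should not pass review by default.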

Firm-specific prompt customization addresses the reality that every law firm practices differently. Casegen develops baseline outbound prompts tailored to each firm’s practice areas, jurisdictional requirements, and case qualification criteria. A medical malpractice firm in California operates with fundamentally different intake protocols than a workers’ compensation practice in Texas. On top of these baseline prompts, flexible question selection gives attorneys control over individual call scenarios. Each firm maintains a company-wide library of custom questions. When initiating an outbound call, attorneys select which questions the AI should ask and provide case-specific context through a dedicated input field. If an attorney knows the prospect was rear-ended at a specific intersection last Tuesday, that context travels with the call, transforming generic automation into informed, case-specific communication.
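The layering described here (firm baseline prompt, attorney-selected questions from the firm’s library, free-text case context) can be sketched as a small prompt-assembly function. The structure, field names, and wording are assumptions for illustration, not Casegen’s actual prompt format.

```python
from dataclasses import dataclass


@dataclass
class FirmConfig:
    baseline_prompt: str              # practice-area and jurisdiction instructions
    question_library: dict[str, str]  # question id -> question text


def build_outbound_prompt(firm: FirmConfig, selected_ids: list[str], case_context: str = "") -> str:
    """Combine the firm baseline, attorney-selected questions, and case-specific context."""
    unknown = [q for q in selected_ids if q not in firm.question_library]
    if unknown:
        raise KeyError(f"Questions not in firm library: {unknown}")
    questions = "\n".join(
        f"{n}. {firm.question_library[q]}" for n, q in enumerate(selected_ids, start=1)
    )
    parts = [firm.baseline_prompt, "Ask the following questions in order:\n" + questions]
    if case_context.strip():
        parts.append("Attorney-provided context for this call:\n" + case_context.strip())
    return "\n\n".join(parts)


firm = FirmConfig(
    baseline_prompt="You are an intake assistant for a personal injury firm.",
    question_library={
        "injury": "What injuries did you sustain?",
        "treatment": "Have you received medical treatment?",
        "police": "Was a police report filed?",
    },
)
print(build_outbound_prompt(firm, ["injury", "police"], "Rear-ended at an intersection last Tuesday."))
```

Rejecting question ids that are not in the firm’s library keeps every call within questions the firm has already vetted.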

Law firms using the system see concrete results. Attorneys reclaim hours previously spent on initial screening. Zero leads slip through because of timing or call volume. The time from initial contact to attorney review drops significantly because every intake arrives structured and complete. Quality stays consistent across every conversation. And firms handle increased call volume without proportional staffing costs.

Legal AI Document Analysis and Knowledge Retrieval

Law firms and compliance consultancies maintain massive document repositories: everything from legislation and case law to internal policies and client-specific materials. The challenge isn’t reading the information; it’s giving teams and clients a reliable way to find the right material at the right moment.

TCC (The Compliance Company Limited), a leading consultancy providing governance reviews, external assurance work, AML/CFT audits, and regulatory compliance guidance for boards and regulated entities across New Zealand and Australia, faced this exact challenge. The traditional expert-led model made it difficult to provide instant responses to routine questions or serve more clients efficiently. With large collections of policies, regulations, client materials, and training content, TCC needed a way for clients to access accurate, traceable answers without always relying on a consultant.

Softcery built UpSkill AI, a document analysis platform using a retrieval-augmented generation (RAG) architecture designed specifically for compliance-critical contexts. The system performs semantic search across all compliance documentation, finding relevant information even when queries are vague or incomplete. It handles follow-up questions using conversation context, understanding that “what about contractors?” relates to the previous question about workplace safety obligations.

The technical architecture addresses compliance-grade accuracy requirements. Every answer includes inline citations with direct links to source documents. The system maintains separate knowledge bases for different jurisdictions (New Zealand and Australia), ensuring that Australian regulations don’t contaminate New Zealand compliance advice. Category-aware retrieval prioritizes Acts over guidance documents, matching the hierarchy legal professionals expect.
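A toy version of jurisdiction-separated, category-aware retrieval is sketched below. It swaps embeddings for bag-of-words cosine similarity so it runs on the standard library alone, and the category weights, document ids, and texts are assumptions, not UpSkill AI’s actual ranking. The point it illustrates: jurisdiction is a hard filter (a separate index), while category only adjusts ranking.

```python
import math
from collections import Counter
from dataclasses import dataclass

# Hypothetical boosts: Acts outrank regulations, which outrank guidance.
CATEGORY_WEIGHT = {"act": 1.3, "regulation": 1.15, "guidance": 1.0}


@dataclass
class Doc:
    doc_id: str
    category: str
    text: str


def vectorize(text: str) -> Counter:
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def search(indexes: dict[str, list[Doc]], jurisdiction: str, query: str, k: int = 3) -> list[tuple[str, float]]:
    """Search only the requested jurisdiction's index, boosting scores by document category."""
    q = vectorize(query)
    scored = [
        (d.doc_id, cosine(q, vectorize(d.text)) * CATEGORY_WEIGHT.get(d.category, 1.0))
        for d in indexes[jurisdiction]
    ]
    return sorted(scored, key=lambda s: s[1], reverse=True)[:k]


# Toy corpus: the AU document can never appear in NZ results.
indexes = {
    "NZ": [
        Doc("nz-act-1", "act", "duties of a business to workers and contractors"),
        Doc("nz-guide-1", "guidance", "guidance on duties to contractors"),
    ],
    "AU": [Doc("au-act-1", "act", "duties to workers and contractors")],
}
results = search(indexes, "NZ", "duties to contractors")
```

Here the guidance note is the closer textual match, but the Act still ranks first because of its category weight.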

Relevance filtering detects when questions fall outside the system’s knowledge domain and says so clearly rather than generating plausible-sounding nonsense. Context-aware query rewriting reformulates follow-up questions to include necessary context from the conversation. A validation layer checks each generated answer for proper structure, grounding in source material, and coverage of the question asked.
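Relevance filtering is often implemented as a similarity floor: if even the best-matching chunk scores below a threshold, the system declines instead of generating. A minimal sketch, assuming retrieval scores between 0 and 1 are already computed; the 0.35 floor, the refusal wording, and the placeholder generation step are illustrative assumptions that would need calibration against real queries.

```python
from typing import Optional

RELEVANCE_FLOOR = 0.35  # hypothetical; calibrate on labelled in-scope vs out-of-scope questions

OUT_OF_SCOPE_MESSAGE = (
    "I couldn't find anything in the knowledge base that covers this question, "
    "so I can't give a sourced answer. Please contact a consultant."
)


def select_context(scored_chunks: list[tuple[str, float]], floor: float = RELEVANCE_FLOOR) -> Optional[list[str]]:
    """Return chunk ids at or above the floor, or None if the question looks out of scope."""
    relevant = [chunk_id for chunk_id, score in scored_chunks if score >= floor]
    return relevant or None


def answer_or_decline(scored_chunks: list[tuple[str, float]]) -> str:
    context = select_context(scored_chunks)
    if context is None:
        return OUT_OF_SCOPE_MESSAGE
    # Placeholder for the generation step, which would receive only `context`.
    return "ANSWER_FROM:" + ",".join(context)
```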

Organization-level data isolation ensures client confidentiality. Firm A’s documents stay completely separate from Firm B’s, with no shared embeddings or cross-client retrieval. The administrative dashboard lets compliance experts review conversations, track questions the system couldn’t answer, and manage document folders.
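Organization-level isolation is easiest to trust when it is structural: every index is keyed by organization and there is no shared index to query by mistake. A minimal sketch with hypothetical class and method names; substring matching stands in for real retrieval, and in a real deployment the organization id would come from the authenticated session rather than from user input.

```python
from collections import defaultdict


class TenantIsolatedStore:
    """Keeps each organization's chunks in a separate index; there is no global index."""

    def __init__(self) -> None:
        self._indexes: dict[str, dict[str, str]] = defaultdict(dict)

    def add(self, org_id: str, chunk_id: str, text: str) -> None:
        self._indexes[org_id][chunk_id] = text

    def search(self, org_id: str, term: str) -> list[str]:
        # Only the caller's own index is ever scanned; unknown orgs get an empty result.
        index = self._indexes.get(org_id, {})
        return [cid for cid, text in index.items() if term.lower() in text.lower()]


store = TenantIsolatedStore()
store.add("firm-a", "a1", "AML risk assessment policy")
store.add("firm-b", "b1", "AML customer due diligence checklist")
print(store.search("firm-a", "aml"))  # ['a1']
```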

The business impact is straightforward. Knowledge lookups that previously consumed hours now resolve in seconds. Compliance professionals spend time providing expert judgment rather than searching for basic information. The firm scales advisory services without proportionally scaling headcount. And quality stays consistent because every answer links back to verified source material rather than relying on unsourced model output.

AI Legal System for Compliance Q&A

Compliance Q&A sits at the intersection of document analysis and conversational AI. Businesses operating in regulated industries need instant answers to compliance questions, but those answers must be accurate, traceable, and jurisdiction-specific. Generic AI responses won’t work.

The UpSkill AI implementation demonstrates production-ready compliance Q&A. The system doesn’t just retrieve relevant documents. It synthesizes information across multiple sources, handles ambiguous questions, and provides structured answers with evidence chains.

Multi-jurisdiction support matters critically in compliance contexts. The system maintains separate knowledge bases with jurisdiction-aware retrieval, ensuring Australian content doesn’t leak into New Zealand advice.

The validation architecture catches common AI failures before they reach users. If the system generates an answer without sufficient grounding in source documents, validation fails and the system either regenerates with stricter retrieval or explicitly states insufficient information. This prevents the most dangerous failure mode: confidently wrong answers.
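The regenerate-or-abstain behavior can be sketched as a bounded loop. The `generate` and `is_grounded` callables are hypothetical stand-ins for the model call and the grounding check, and modeling “stricter retrieval” as a rising similarity floor is an assumption.

```python
from typing import Callable

INSUFFICIENT = "The available sources don't contain enough information to answer this reliably."


def answer_with_validation(
    question: str,
    generate: Callable[[str, float], tuple[str, list[str]]],
    is_grounded: Callable[[str, list[str]], bool],
    floors: tuple[float, ...] = (0.3, 0.5, 0.7),
) -> str:
    """Try progressively stricter retrieval; return the first grounded answer or abstain."""
    for floor in floors:
        answer, sources = generate(question, floor)
        # An answer with no sources can never pass, regardless of the grounding check.
        if sources and is_grounded(answer, sources):
            return answer
    return INSUFFICIENT
```

The loop is bounded on purpose: after the last attempt the system states that information is insufficient instead of retrying until something plausible comes out.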

Production deployment reveals requirements that demos skip. The system needs role-based access control (admin, expert, lite user roles) to match how the consultancy actually operates. Clean architecture with separated concerns enables independent scaling as usage grows. Integration with existing authentication systems (they use Clerk) maintains security without creating separate login workflows.
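The three roles could map to permissions roughly as follows. The permission names and assignments are assumptions for illustration (expert access loosely follows the dashboard capabilities described earlier); the source names only the roles.

```python
from enum import Enum


class Role(Enum):
    ADMIN = "admin"
    EXPERT = "expert"
    LITE = "lite"


# Hypothetical permission sets per role.
PERMISSIONS: dict[Role, frozenset[str]] = {
    Role.LITE: frozenset({"ask_question"}),
    Role.EXPERT: frozenset({"ask_question", "review_conversations", "view_unanswered", "manage_documents"}),
    Role.ADMIN: frozenset({"ask_question", "review_conversations", "view_unanswered",
                           "manage_documents", "manage_users"}),
}


def authorize(role: Role, action: str) -> None:
    """Raise PermissionError unless the role grants the action."""
    if action not in PERMISSIONS[role]:
        raise PermissionError(f"{role.value} users cannot {action}")
```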

AI for Law Firms: What’s Still Experimental

Not every impressive demo translates to production reliability.

Legal AI for Full Case Analysis: The Context Problem

AI systems can summarize individual documents effectively. They struggle with holistic case analysis requiring understanding of relationships across hundreds of documents, timeline reconstruction, identification of contradictions, and legal judgment about what matters.

The technical problem is context length and reasoning depth. Even with large context windows (100k+ tokens), AI models lose track of details across long documents. They miss subtle contradictions between a deposition taken in month three and an email from month one. They can’t reliably distinguish material facts from background noise.

More fundamentally, full case analysis requires legal judgment that current AI systems lack. An experienced attorney reviews discovery documents and identifies the three pieces of evidence that actually matter for summary judgment. AI systems treat everything with equal weight or apply statistical relevance that doesn’t match legal significance.

AI for Law Firms Legal Strategy: The Judgment Gap

Strategy development demands understanding of opposing counsel’s likely moves, judge-specific tendencies, client risk tolerance, and cost-benefit analysis of different legal paths. These require human judgment grounded in experience, relationships, and contextual factors that don’t appear in training data.

AI systems can surface relevant precedents and analyze possible arguments. They can’t tell you which strategy will actually work with this specific judge, in this jurisdiction, against this opposing counsel. The demo shows impressive legal research. Production reveals that research without judgment creates useless output.

Legal AI Courtroom Prediction: The Data Problem

Predictive systems claiming to forecast case outcomes face insurmountable data quality problems. Court records contain outcomes but rarely capture the full reasoning, evidence quality, attorney skill, or judge-specific factors that drove decisions. Training on outcomes without understanding causes creates models that find spurious correlations.

Even if data quality improved, the stakes make predictions dangerous. A system predicting 70% win probability might be accurate in aggregate across 1,000 cases. For the specific client whose case falls in the 30%, the prediction was catastrophically wrong. Law firms face malpractice risk when clients rely on AI predictions that don’t pan out.
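The arithmetic behind that point is simple. Even if the model were perfectly calibrated, a 70% prediction across 1,000 comparable cases still implies hundreds of losing clients:

```latex
\mathbb{E}[\text{losses}] = (1 - 0.70) \times 1000 = 300
```

Calibration is a property of the aggregate; it says nothing about which 300 clients will lose.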

Production Requirements AI Legal Systems Need

The gap between demo and production is also about infrastructure, compliance, and edge case handling that don’t appear in controlled testing environments.

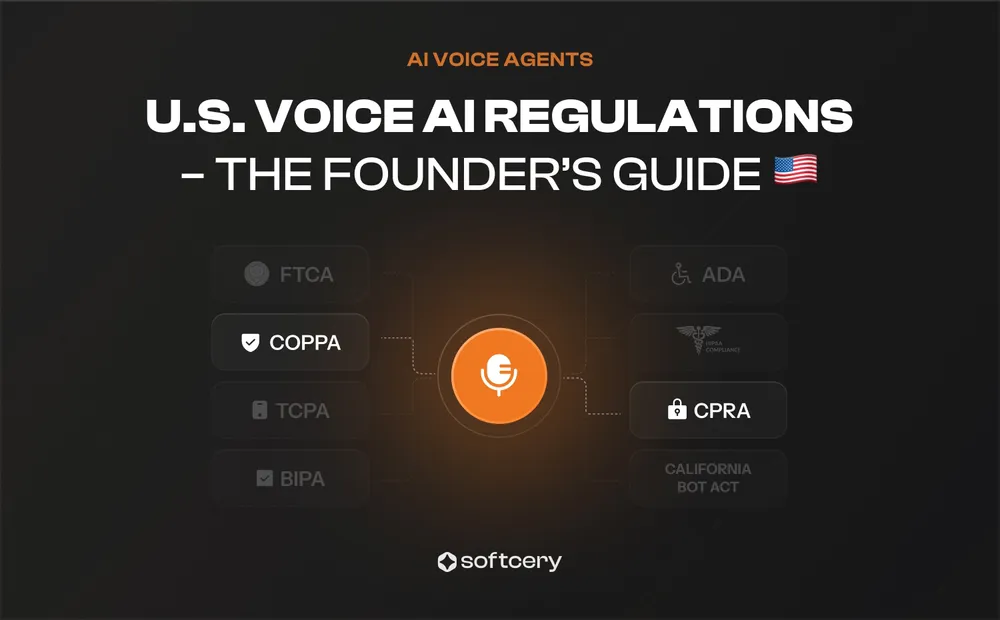

Compliance and Audit Requirements

Production legal AI must satisfy bar association guidelines, which vary by jurisdiction and evolve faster than software development cycles. The ABA’s Formal Opinion 512 calls on lawyers to understand the capabilities and limitations of the AI tools they use, protect confidential client information (which can require informed client consent before entering it into some tools), review AI output for accuracy, and, in some circumstances, disclose AI use to clients. State bar associations layer additional requirements on top.

Audit trails become critical. Every AI interaction needs logging with timestamps, users, data accessed, sources cited, and confidence scores. Discovery requests may require producing this information. System architecture must support compliance from day one, not retrofitted later. Building production-ready AI for law firms means treating observability and audit capabilities as core requirements, not operational afterthoughts.
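An audit record covering the fields listed above might look like the following sketch. The schema, the extra `model_version` field, and the JSON Lines file are assumptions; a production system would more likely write to append-only, access-controlled storage with retention rules matching the firm’s obligations.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    user_id: str
    query: str
    documents_accessed: list[str]
    sources_cited: list[str]
    confidence: float
    model_version: str  # assumption: not in the list above, but needed to reproduce answers
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def append_audit(path: str, record: AuditRecord) -> None:
    """Append one record as a JSON line; existing lines are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record), sort_keys=True) + "\n")
```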

Accuracy and Verification Systems

Legal AI systems must achieve accuracy levels that other industries consider unnecessary. A 95% accuracy rate sounds impressive until you consider that it means 5% of client-facing answers contain errors, each one a potential source of malpractice exposure. Production systems need accuracy above 98%, with verification layers catching failures before they reach clients.

The verification layers include:

- Source attribution ensures every claim links to a specific document.
- Citation verification confirms that cited cases actually exist and contain the quoted text.
- Confidence scoring flags uncertain answers for human review.
- Grounding checks verify that generated text actually reflects source material rather than model hallucination.
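Citation verification, the second check in the list, is the most mechanical of these layers: confirm that each cited source exists in the corpus and that any quoted passage actually appears in it. A minimal sketch, assuming citations arrive as (source id, quoted text) pairs and the corpus is available locally; whitespace and case normalization is a simplifying assumption, and real systems also have to cope with OCR noise and pinpoint citations.

```python
import re


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so formatting differences don't cause false failures."""
    return re.sub(r"\s+", " ", text).strip().lower()


def verify_citations(citations: list[tuple[str, str]], corpus: dict[str, str]) -> list[str]:
    """Return human-readable problems; an empty list means every citation checked out."""
    problems = []
    for source_id, quote in citations:
        if source_id not in corpus:
            problems.append(f"{source_id}: source not found")
        elif normalize(quote) not in normalize(corpus[source_id]):
            problems.append(f"{source_id}: quoted text not found in source")
    return problems
```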

Demos use clean data. Production encounters scanned documents with poor OCR quality, handwritten notes, multi-language content, corrupted files, and documents in formats the system wasn’t trained on. Edge case handling determines whether the system fails gracefully or creates downstream problems.

Production legal AI systems need explicit error handling for each failure mode. When OCR quality is insufficient, the system routes to manual review rather than analyzing garbled text. When confidence scores drop below thresholds, human escalation triggers automatically. When questions fall outside the system’s knowledge domain, it states that clearly.
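The three failure modes above map naturally onto an explicit dispatcher. The signal names and thresholds are hypothetical; what the sketch shows is that every branch ends in a defined outcome instead of passing garbled input or low-confidence output through silently.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ANSWER = "answer"
    MANUAL_OCR_REVIEW = "manual_ocr_review"
    OUT_OF_DOMAIN = "out_of_domain"
    HUMAN_ESCALATION = "human_escalation"


@dataclass
class Signals:
    ocr_quality: float        # 0-1, e.g. mean OCR word confidence (hypothetical signal)
    retrieval_score: float    # best retrieval similarity, 0-1
    answer_confidence: float  # 0-1 confidence score for the draft answer


# Hypothetical thresholds.
MIN_OCR_QUALITY = 0.85
MIN_RETRIEVAL = 0.35
MIN_CONFIDENCE = 0.80


def route_request(s: Signals) -> Route:
    """Check failure modes in pipeline order: input quality, domain fit, output confidence."""
    if s.ocr_quality < MIN_OCR_QUALITY:
        return Route.MANUAL_OCR_REVIEW
    if s.retrieval_score < MIN_RETRIEVAL:
        return Route.OUT_OF_DOMAIN
    if s.answer_confidence < MIN_CONFIDENCE:
        return Route.HUMAN_ESCALATION
    return Route.ANSWER
```

Ordering matters: there is no point scoring an answer’s confidence if it was generated from unreadable input.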

Integration and Data Pipeline Complexity

Legal AI doesn’t operate in isolation. It needs integration with case management systems (Clio, MyCase, custom platforms), document management (NetDocuments, iManage, SharePoint), billing systems for time tracking, research databases (Westlaw, LexisNexis), and client communication platforms. Each integration adds complexity, potential failure points, and maintenance burden.

Data pipelines require ongoing attention. Legal information changes constantly as statutes get amended, case law evolves, and regulations update. Production systems need processes for updating knowledge bases, refreshing embeddings, validating that changes didn’t break existing functionality, and tracking what version of information the system used for past answers.
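Tracking which version of the knowledge base produced a past answer can be done by fingerprinting the document set at each update and storing that fingerprint with every answer. A minimal sketch using content hashes from the standard library; the snapshot scheme is an assumption, and a real pipeline would also version the embedding model and chunking settings, since changing either one changes retrieval.

```python
import hashlib
import json


def snapshot_id(documents: dict[str, str]) -> str:
    """Deterministic fingerprint of a document set: same content gives the same id."""
    digests = {doc_id: hashlib.sha256(text.encode("utf-8")).hexdigest()
               for doc_id, text in documents.items()}
    canonical = json.dumps(digests, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


def changed_documents(old: dict[str, str], new: dict[str, str]) -> set[str]:
    """Doc ids added, removed, or modified; these need re-embedding and regression checks."""
    return {d for d in old.keys() | new.keys() if old.get(d) != new.get(d)}


v1 = {"act-s5": "original text", "guide-2": "guidance"}
v2 = {"act-s5": "amended text", "guide-2": "guidance", "reg-9": "new regulation"}
print(snapshot_id(v1) != snapshot_id(v2))  # True
print(sorted(changed_documents(v1, v2)))   # ['act-s5', 'reg-9']
```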

How to Choose the Right Legal AI Use Case for Your Firm

Understanding which AI capabilities work in production doesn’t automatically reveal which one fits a particular firm. The right starting point depends on current pain points, available resources, and organizational readiness. This framework helps law firms evaluate their situation and select the AI use case with the highest probability of successful deployment.

AI for Law Firms Readiness Assessment

Before committing budget to any legal AI implementation, evaluate your firm across four dimensions: operational pain points, technical infrastructure, resource availability, and risk tolerance.

Operational Pain Points Analysis

Map the firm’s biggest bottlenecks to AI capabilities that address them directly. If missed client calls cost cases monthly, intake automation delivers immediate ROI. Calculate lost revenue from after-hours inquiries and qualification time attorneys spend on low-value leads. For firms losing 20+ potential clients monthly to timing issues, intake automation pays for itself within six months.

If attorneys spend hours searching for precedents, regulations, or internal knowledge, document analysis targets the right problem. Track how much time legal professionals spend finding information versus applying it. When search time exceeds 30% of research work, document analysis becomes economically justified.

For compliance-focused practices drowning in client questions about regulatory requirements, compliance Q&A systems scale expertise without scaling headcount. Measure how many routine compliance questions your team handles monthly and estimate time per response. High-volume, repetitive inquiry patterns signal readiness for automation.

Technical Infrastructure Evaluation

Your existing technology stack determines integration complexity and deployment timeline. Firms using mainstream platforms (Clio, MyCase, NetDocuments) deploy faster because integration patterns are proven. Custom or legacy systems add 2-4 months to timelines and increase development costs by 40-60%.

Assess your current authentication systems, data storage architecture, and API availability. Modern cloud-based systems integrate smoothly. On-premise infrastructure with limited API access creates significant technical challenges that may require infrastructure upgrades before AI deployment makes sense.

Resource Requirements by Use Case

Different AI implementations demand different resource commitments. Intake automation requires the lowest ongoing maintenance. Once deployed, the system runs largely autonomously with monthly review of conversation quality and quarterly prompt refinement. Budget 5-10 hours monthly for oversight and optimization.

Document analysis demands more continuous attention. Legal information updates constantly. Plan for weekly knowledge base updates, monthly accuracy audits, and quarterly system retraining. Allocate 20-30 hours monthly for knowledge management and quality assurance.

Compliance Q&A sits between these extremes, requiring weekly regulatory updates and bi-weekly accuracy validation. Budget 15-20 hours monthly for maintenance and content updates.

All three require initial investments: 3-4 months for intake automation (USD 150K-300K), 4-6 months for document analysis (USD 200K-400K), and 4-5 months for compliance Q&A (USD 175K-350K). These ranges assume standard integrations. Complex requirements increase timelines and costs proportionally.

Legal AI Implementation Success Metrics

Define success criteria before deployment, not after. Different use cases require different measurement approaches.

- For intake automation, track lead conversion rates (target: 15-25% improvement), after-hours capture rates (target: 80%+ of previously missed opportunities), time from initial contact to attorney review (target: 60%+ reduction), and attorney time spent on initial screening (target: 70%+ reduction). Monitor these weekly during the first quarter, then monthly.

- Document analysis success shows in search time reduction (target: 80%+ faster), answer accuracy (target: 98%+), attorney satisfaction scores (target: 8/10 or higher), and reduction in duplicate research efforts. Track accuracy continuously, other metrics monthly.

- Compliance Q&A performance requires answer accuracy (target: 98%+), response completeness (target: 90%+ of questions answered without escalation), jurisdiction-specific accuracy (target: 99%+ correct jurisdiction matching), and client satisfaction. Review these metrics bi-weekly initially, then monthly after stabilization.

All implementations should track system uptime (target: 99.5%+), average response latency (target: under 3 seconds), and cost per interaction. These operational metrics reveal whether the system meets production requirements.
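Writing targets down as data makes “define success criteria before deployment” concrete and lets the same check run weekly or monthly. The threshold values come from the targets listed above; the metric keys and the `Target` structure are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    threshold: float
    higher_is_better: bool = True


# Targets from the section above: uptime in %, latency in seconds, accuracy in %.
TARGETS = {
    "uptime_pct": Target(99.5),
    "avg_latency_s": Target(3.0, higher_is_better=False),
    "answer_accuracy_pct": Target(98.0),
}


def missed_targets(measured: dict[str, float]) -> dict[str, float]:
    """Return the metrics that miss their target, with the measured value."""
    missed = {}
    for name, target in TARGETS.items():
        value = measured.get(name)
        if value is None:
            continue  # not measured for this use case (e.g. accuracy for intake)
        ok = value >= target.threshold if target.higher_is_better else value < target.threshold
        if not ok:
            missed[name] = value
    return missed


print(missed_targets({"uptime_pct": 99.7, "avg_latency_s": 3.4, "answer_accuracy_pct": 98.2}))
# {'avg_latency_s': 3.4}
```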

ROI Calculation Framework for Legal AI

Calculate expected ROI before committing to implementation. The formula differs by use case but follows a consistent pattern: quantified benefits minus total costs, divided by total costs, expressed as a percentage.

- For intake automation: Calculate current missed opportunity cost (number of missed leads monthly × average case value × conversion rate). Add time saved by attorneys on screening (hours monthly × billable rate). Subtract implementation costs (initial development + monthly infrastructure + maintenance time).

- For document analysis: Quantify time saved on research (hours monthly × billable rate) plus quality improvements reducing rework (estimated hours × billable rate). Subtract implementation and maintenance costs.

- For compliance Q&A: Calculate professional time saved answering routine questions (hours monthly × billable rate) plus capacity gained for higher-value work (additional billable hours × rate). Subtract implementation and maintenance costs.
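The intake-automation version of the formula can be worked through in code. Every input value in the example call is a hypothetical placeholder, not a benchmark, and two modeling choices are assumptions: maintenance hours are valued at the billable rate, and missed leads are assumed to be fully recovered. The function encodes ROI = (benefits − costs) / costs × 100 over a chosen number of months.

```python
def intake_roi_pct(
    missed_leads_per_month: float,
    avg_case_value: float,
    conversion_rate: float,
    screening_hours_saved_per_month: float,
    billable_rate: float,
    initial_cost: float,
    monthly_infra_cost: float,
    monthly_maintenance_hours: float,
    months: int = 12,
) -> float:
    """ROI over `months`, as a percentage: (benefits - costs) / costs * 100."""
    recovered_revenue = missed_leads_per_month * avg_case_value * conversion_rate
    attorney_time_value = screening_hours_saved_per_month * billable_rate
    benefits = (recovered_revenue + attorney_time_value) * months
    costs = initial_cost + (monthly_infra_cost + monthly_maintenance_hours * billable_rate) * months
    return (benefits - costs) / costs * 100


# Hypothetical inputs: 20 missed leads/month, $10K case value, 25% conversion,
# 20 screening hours saved at $300/h, $200K build, $2K/month infra, 8 maintenance hours/month.
roi = intake_roi_pct(20, 10_000, 0.25, 20, 300, 200_000, 2_000, 8)
print(round(roi, 1))  # 165.8
```

The same shape works for document analysis and compliance Q&A by swapping the benefit terms for hours saved and capacity gained.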

Conclusion

The legal AI landscape currently shows varying levels of production readiness across different capabilities. Three have proven themselves reliable in production: intake automation, document analysis, and compliance Q&A. More complex capabilities like full case analysis, legal strategy generation, and courtroom prediction show promise but need additional development before they’re ready for unsupervised deployment.

Law firms entering the legal AI space benefit from prioritizing proven capabilities with appropriate production infrastructure. While emerging technologies show promise in demonstrations, production deployment demands robust error handling, compliance frameworks, and quality assurance processes. Starting with mature capabilities delivers measurable efficiency gains while building the foundation for more advanced implementations.

The right starting point depends on the firm’s biggest bottleneck: intake automation for firms losing leads, document analysis when research slows the team down, or compliance Q&A if regulatory questions consume professional time.

Frequently Asked Questions

Three legal AI capabilities have proven production-readiness: intake automation, document analysis, and compliance Q&A. Intake automation handles client screening, qualification, and information collection 24/7 with structured output for attorney review. Document analysis performs semantic search across legal documents, regulations, and case law with citation tracking and jurisdiction-specific retrieval. Compliance Q&A synthesizes information from multiple sources to answer regulatory questions with full traceability. These work because they operate within defined boundaries, maintain high accuracy through validation layers, and include proper audit infrastructure. Full case analysis, legal strategy development, and courtroom prediction remain experimental because they require legal judgment that current AI systems lack.

Intake automation succeeds in production because failure modes are manageable and outputs receive human review before client engagement. The AI collects structured information (contact details, case type, injury specifics, involved parties) that attorneys validate before accepting cases. This creates a safety layer preventing errors from reaching clients. The task is also well-defined with clear success criteria and doesn’t require complex legal judgment. Systems like Casegen demonstrate that intake automation handles messy real-world conversations, adapts to unexpected information, and routes to humans when needed. Most importantly, the ROI is immediate and measurable through lead capture rates, after-hours conversions, and attorney time savings.

Legal AI document analysis uses retrieval-augmented generation (RAG) architecture to understand context, not just match keywords. When you ask about contractor safety obligations, the system doesn’t just find documents mentioning contractors. It retrieves Acts, regulations, guidance documents, and internal policies, then synthesizes a coherent answer showing where each piece of information comes from. Production systems like UpSkill AI include jurisdiction-aware retrieval ensuring Australian regulations don’t contaminate New Zealand advice, relevance filtering that admits when questions fall outside knowledge boundaries, validation layers checking answer grounding, and organization-level data isolation maintaining client confidentiality. Regular search returns documents. Production legal AI document analysis returns verified answers with evidence chains.

Full case analysis fails in production for three reasons. First, context limitations mean AI models lose track of details across hundreds of discovery documents even with large context windows (100k+ tokens). They miss contradictions between a deposition from month three and an email from month one. Second, the systems can’t distinguish material facts from background noise the way experienced attorneys do. They apply statistical relevance that doesn’t match legal significance. Third, and most critically, case analysis requires legal judgment about what evidence actually matters for summary judgment or settlement negotiations. AI systems treat everything with equal weight, lacking the strategic understanding that separates important evidence from irrelevant details. Until these problems are solved, full case analysis remains too unreliable for production legal work.

Assess readiness across four dimensions. First, operational pain points: if you lose 20+ potential clients monthly to timing issues, intake automation delivers immediate value. If attorneys spend over 30% of research time searching rather than analyzing, document analysis makes sense. Second, technical infrastructure: firms using mainstream platforms (Clio, MyCase, NetDocuments) deploy faster. Legacy systems add 2-4 months to timelines. Third, resource availability: can you commit 5-10 hours monthly for intake automation maintenance or 20-30 hours for document analysis? Fourth, risk tolerance: intake automation has manageable failure modes because attorneys review outputs before engagement. Document analysis and compliance Q&A require higher risk tolerance because some outputs may reach clients directly, checked only by automated validation layers.

Maintenance requirements vary significantly by use case. Intake automation needs the least ongoing attention: 5-10 hours monthly reviewing conversation quality, updating prompts quarterly, and monitoring lead conversion metrics. Document analysis demands 20-30 hours monthly for weekly knowledge base updates as legal information changes, monthly accuracy audits, quarterly system retraining, and continuous tracking of retrieval performance. Compliance Q&A sits in between at 15-20 hours monthly for weekly regulatory updates, bi-weekly accuracy validation, and jurisdiction-specific content management. All systems require monitoring of operational metrics (uptime, latency, error rates), compliance audits as regulations evolve, and staff training as capabilities expand. Firms that budget only for initial implementation without accounting for ongoing maintenance costs face system degradation and compliance gaps.