How AI Legal Research Actually Works (And Why Most Tools Get Citations Wrong)

Last updated on November 28, 2025

Legal AI research tools promise instant case law analysis and regulatory lookups. Many deliver impressive demos. Then production reveals a critical problem: fabricated citations, misattributed quotes, and confidently stated references to cases that don’t exist.

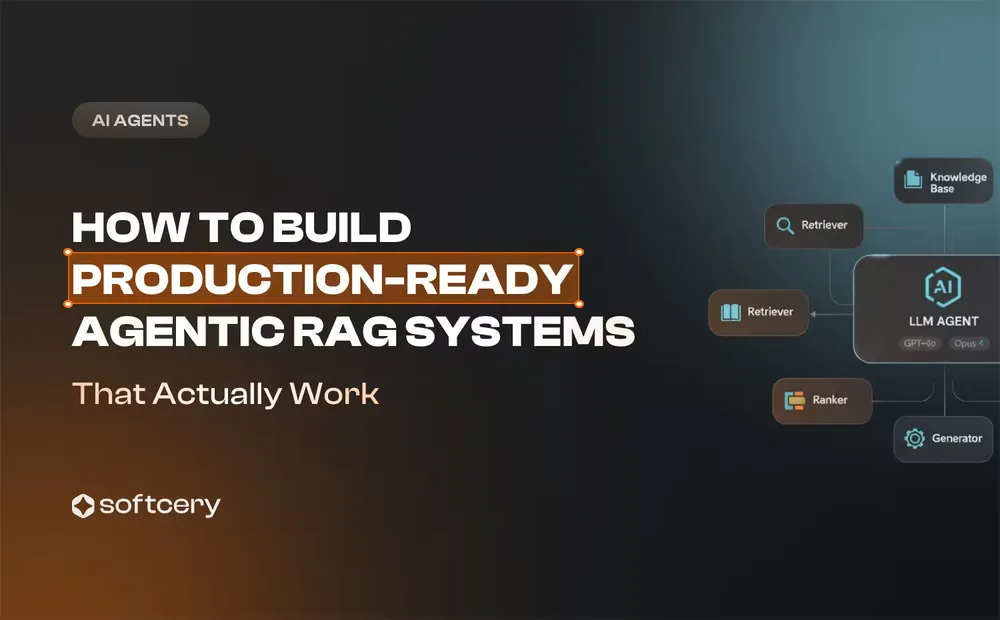

The issue isn’t that AI systems lack legal knowledge. Modern language models have absorbed millions of legal documents during training. The problem is architectural. Understanding why AI gets citations wrong and how reliable systems prevent these failures requires examining the technical architecture beneath the interface. This article explores RAG systems for legal research, the mechanisms behind citation hallucinations, validation layers that catch errors before they reach users, compliance-critical traceability requirements, and a case study showing how production systems implement these principles.

How AI Legal Research Works

AI legal research systems combine three distinct technologies: large language models for understanding queries and generating responses, retrieval systems for finding relevant legal documents, and validation architectures that verify accuracy before showing results to users.

How RAG Architecture Powers AI Legal Research

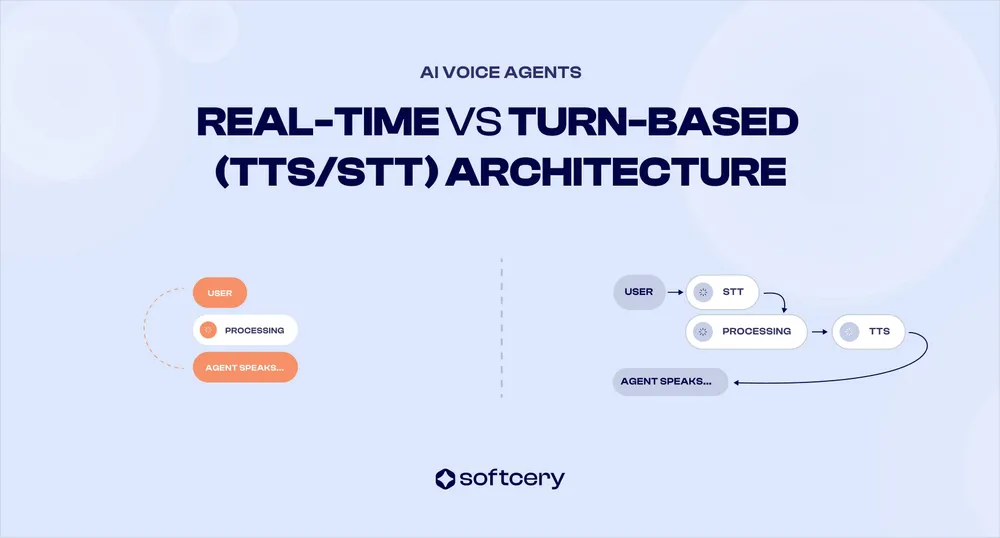

Retrieval-augmented generation (RAG) addresses the core limitation of pure language models: they generate text based on learned patterns but lack real-time access to specific documents. When an attorney asks about recent case law or jurisdiction-specific regulations, the model can’t search its training data for that exact information. RAG adds retrieval as a first step before generation.

The process begins with choosing a chunking strategy. Legal documents must be broken into smaller chunks for embedding and retrieval. These chunks are preprocessed and embedded into vector representations using specialized models.

Once documents are chunked and embedded, the system handles queries through semantic search. When an attorney asks a question, the system converts that natural language question into a vector representation capturing semantic meaning. This query embedding enables similarity matching against the database of preprocessed legal document chunks.

Semantic search runs across the vector database to identify documents most relevant to the query. Unlike keyword matching, vector similarity finds cases discussing similar legal concepts even when exact terminology differs. A search for “employment discrimination based on pregnancy” retrieves relevant cases that discuss “adverse treatment of expectant mothers” or “bias against pregnant employees” because these concepts embed near each other in vector space.

The retrieval phase returns the top-k most relevant chunks (typically 5-20 passages from legal documents). These chunks become context for the generation phase. The language model receives the original query plus retrieved legal text and generates a response grounded in that specific material rather than relying solely on general model knowledge.
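The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration rather than a production design: the three-dimensional vectors stand in for real embeddings, and `retrieve_top_k` and `build_prompt` are hypothetical names introduced here.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve_top_k(query_vec, index, k=5):
    """index is a list of (chunk_text, vector); return the k most similar chunks."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]

def build_prompt(question, chunks):
    """Place the retrieved chunks in the prompt so generation works from them."""
    context = "\n\n".join(f"[{i + 1}] {c}" for i, c in enumerate(chunks))
    return (
        "Answer using only the numbered sources below. "
        "If they do not answer the question, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )

# Toy vectors (assumption): a real system would call an embedding model.
toy_index = [
    ("Pregnancy discrimination passage", [0.9, 0.1, 0.0]),
    ("Adverse treatment of expectant mothers passage", [0.8, 0.2, 0.1]),
    ("Maritime salvage passage", [0.0, 0.1, 0.9]),
]
top = retrieve_top_k([1.0, 0.0, 0.0], toy_index, k=2)
prompt = build_prompt("Is pregnancy discrimination actionable?", top)
```

Only the two employment passages reach the prompt; the unrelated maritime passage scores lowest and is dropped.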

This architecture solves the knowledge cutoff problem. New cases added to the vector database immediately become accessible without retraining the underlying language model. A ruling published yesterday can inform research today if the document gets indexed into the retrieval system.

Why AI for Legal Research Requires Document-Grounded Responses

Pure generative models hallucinate case citations with alarming regularity. When asked about precedent for a specific legal doctrine, models trained on legal text know the pattern: cite supporting cases with proper formatting, include court and year, reference relevant holdings. The model generates citations that look perfect but reference cases that don’t exist or misattribute holdings to real cases.

Hallucination occurs because language models learn statistical patterns, not factual databases. During training, the model observed thousands of citations following standard formats. It learned that a citation typically includes “v.” between parties, a court identifier, a year in parentheses, and a reporter citation. Given a prompt requiring case support, the model generates text matching that learned pattern without verifying whether that specific case actually decided that specific issue.

RAG constrains generation by requiring the model to work from retrieved documents. Instead of generating citations from learned patterns, the system must extract citations from actual legal text in the retrieved chunks. If the database contains the case, retrieval finds it, and generation references it accurately. If the case isn’t in the database, a properly constrained system has nothing to cite, because generation is grounded in retrieved content.

AI Legal Research Accuracy: Document Chunking Challenges

RAG systems require breaking long legal documents into smaller chunks for embedding and retrieval. This chunking decision significantly affects research quality.

Fixed-size chunking (splitting every 500 tokens with 50-token overlap) breaks semantic units. A court opinion discussing a multi-part legal test gets fragmented across chunks. The first chunk contains elements 1-2 of the test, the second contains elements 3-4. When an attorney searches for the complete test, neither chunk fully answers the question. The system retrieves partial information and either presents an incomplete answer or attempts to synthesize across chunks, introducing error risk.
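A minimal sketch of fixed-size chunking with overlap, using the 500/50 figures from the paragraph above as defaults. The function name `fixed_size_chunks` is hypothetical, and the input is assumed to be an already-tokenized list.

```python
def fixed_size_chunks(tokens, size=500, overlap=50):
    """Split a token list into windows of `size` that share `overlap` tokens."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # the last window already reaches the end of the document
    return chunks

# Placeholder tokens (assumption): a real pipeline would use a tokenizer.
tokens = [f"t{i}" for i in range(1200)]
chunks = fixed_size_chunks(tokens)
```

The boundaries fall wherever the token count dictates, which is exactly why a multi-part legal test can end up split across two chunks.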

Section-aware chunking respects document structure. Legal opinions have predictable organization: procedural history, facts, discussion, holding. Chunking at section boundaries preserves complete legal reasoning units. When the system retrieves the “Discussion” section analyzing a specific doctrine, it captures the full analysis rather than an arbitrary mid-paragraph split.
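As a rough sketch of section-aware chunking, the snippet below splits an opinion at heading lines. It assumes, purely for illustration, that sections are introduced by the four uppercase headings named above on their own lines; real opinions vary widely and need a more tolerant parser.

```python
import re

# Assumed heading vocabulary; real opinions use many variants.
SECTION_HEADINGS = ("PROCEDURAL HISTORY", "FACTS", "DISCUSSION", "HOLDING")
HEADING_RE = re.compile(
    r"^(%s)[ \t]*$" % "|".join(SECTION_HEADINGS), re.MULTILINE
)

def split_by_section(opinion_text):
    """Return one chunk per section; text before the first heading is ignored."""
    matches = list(HEADING_RE.finditer(opinion_text))
    sections = []
    for i, m in enumerate(matches):
        end = matches[i + 1].start() if i + 1 < len(matches) else len(opinion_text)
        body = opinion_text[m.end():end].strip()
        sections.append({"section": m.group(1), "text": body})
    return sections

opinion = (
    "PROCEDURAL HISTORY\nAppeal from summary judgment.\n"
    "FACTS\nThe plaintiff was dismissed.\n"
    "DISCUSSION\nThe test has four elements.\nAll four must be met.\n"
    "HOLDING\nReversed."
)
sections = split_by_section(opinion)
```

Here the whole "DISCUSSION" analysis stays in one chunk instead of being cut mid-paragraph.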

Hierarchical chunking stores both small chunks for precise retrieval and larger parent chunks for context. Initial retrieval targets small, semantically focused chunks (individual paragraphs or subsections). Once the system identifies relevant chunks, it expands to retrieve the parent section or full case opinion.
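One way to picture the small-to-parent expansion is sketched below. It assumes sections are already available as a dictionary and that paragraphs are separated by blank lines; `build_hierarchy` and `expand_to_parents` are hypothetical helper names.

```python
def build_hierarchy(sections):
    """sections maps section_id -> text; return paragraph-level child chunks."""
    children = []
    for section_id, text in sections.items():
        for n, para in enumerate(p.strip() for p in text.split("\n\n")):
            if para:
                children.append(
                    {"id": f"{section_id}#{n}", "parent": section_id, "text": para}
                )
    return children

def expand_to_parents(hit_ids, children, sections):
    """Map retrieved child ids to their parent sections, without duplicates."""
    by_id = {c["id"]: c for c in children}
    parent_ids = []
    for hit in hit_ids:
        parent = by_id[hit]["parent"]
        if parent not in parent_ids:
            parent_ids.append(parent)
    return [sections[p] for p in parent_ids]

sections = {"discussion": "Para A.\n\nPara B.", "holding": "Reversed."}
children = build_hierarchy(sections)
# Two paragraph hits from the same section expand to that section once.
context = expand_to_parents(["discussion#1", "discussion#0"], children, sections)
```

Search runs over the small paragraph chunks, while the generation step receives the full parent section.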

Citation-aware chunking treats case citations as semantic units. When text references supporting precedent, the chunking algorithm keeps the citation with the sentence referencing it rather than splitting mid-citation, which preserves the connection between legal propositions and their supporting authorities.

Tables and structured information require special handling. Statutes often use nested numbering, provisions contain definitions referenced throughout, and regulations include complex conditional logic. Converting these to plain text loses critical structure. Advanced systems parse structured legal content into a format preserving logical relationships before embedding.

AI in Legal Research: Choosing the Right Embedding Models

Generic embedding models treat “plaintiff” and “defendant” as semantically similar because they frequently appear in similar contexts. For legal research, conflating these terms creates precision problems. Domain-specific embeddings understand legal semantics more accurately.

Fine-tuned legal embeddings learn from legal text corpora. Training on case law, statutes, regulations, and legal scholarship teaches the model relationships specific to legal reasoning. “Reasonable suspicion” and “probable cause” have distinct legal meanings despite similar everyday language. Fine-tuned embeddings capture this distinction because they’ve observed how courts use these terms differently.

The gains from domain adaptation concentrate in queries that depend on precise legal terminology rather than broad conceptual matches. Domain-specific options like voyage-law-2 provide purpose-built legal embeddings trained on legal corpora, offering improved precision for legal research without requiring custom fine-tuning.

The cost tradeoff matters. Generic embeddings like OpenAI’s text-embedding-3-small cost $0.02 per million tokens and work reasonably well for general legal text. Fine-tuning open-source models like bge-m3 requires training data collection, compute resources for training, and ongoing maintenance as legal language evolves. For legal research systems handling high query volumes where precision directly impacts attorney efficiency, the accuracy gain justifies the investment in domain-specific or fine-tuned embeddings.

How AI Legal Research Uses Hybrid Search for Precision

Pure semantic search fails when attorneys need specific cases, statutes, or regulations identified by name, number, or citation. Vector similarity excels at conceptual matching but struggles with exact identifiers.

An attorney searching for “42 USC 1983” needs that specific statute, not conceptually similar civil rights laws. Vector search might return related provisions like 42 USC 1981 or 42 USC 1985 because they discuss similar concepts and embed near each other. For legal research, this conceptual similarity creates noise rather than value.

Keyword search (BM25 algorithm) handles exact matching reliably. It ranks documents by term frequency and inverse document frequency, with document-length normalization, excelling at finding specific statutory citations, case names, or regulatory sections. When the query includes “Smith v. Jones,” BM25 retrieves documents containing that exact case name ranked by relevance.

Hybrid search runs both retrieval methods in parallel. Vector search finds conceptually relevant materials, BM25 finds exact matches, then a fusion algorithm combines results. Reciprocal Rank Fusion provides a simple, effective merging strategy that performs well without requiring tuned weights.

The implementation retrieves top-20 candidates from vector search and top-20 from BM25, merges using RRF scoring, then returns the top-10 combined results. This ensures that highly relevant semantic matches surface alongside exact statutory or case references.
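A minimal sketch of Reciprocal Rank Fusion follows. Each document scores the sum of `1 / (k + rank)` across the rankings it appears in; `k=60` is the commonly used default constant, and the document ids are made up for illustration.

```python
def reciprocal_rank_fusion(rankings, k=60, top_n=10):
    """Merge ranked id lists; each list contributes 1 / (k + rank) per document."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by score descending; break ties by id so output is deterministic.
    ordered = sorted(scores, key=lambda d: (-scores[d], d))
    return ordered[:top_n]

# Hypothetical result lists from the two retrievers.
vector_hits = ["case_a", "case_b", "statute_1983"]
bm25_hits = ["statute_1983", "case_a", "case_c"]
fused = reciprocal_rank_fusion([vector_hits, bm25_hits])
```

Documents found by both retrievers (`case_a`, `statute_1983`) rise above those found by only one, without any per-retriever weights to tune.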

Production legal research systems treat hybrid search as a baseline requirement rather than an optional enhancement. The failure mode of pure vector search (missing exact citations attorneys specifically request) creates an unacceptable user experience and limits system utility for common legal research tasks.

Why AI Gets Legal Citations Wrong: The Hallucination Problem

Citation hallucinations stem from three architectural causes: training data patterns encouraging fabrication, insufficient validation between generation and output, and misaligned incentives in model optimization.

| Root Cause | How It Happens | Why It’s Dangerous | Prevention Strategy |
|---|---|---|---|
| Training Data Patterns | Models learn citation formats (case name, reporter, court, year) and density (citation every 2-3 sentences) from training data. Generate text matching these patterns without verifying citations exist. | Produces perfectly formatted citations to nonexistent cases. Low-quality training data with citation errors teaches model that plausible-sounding fabrications are valid. | RAG architecture constraining generation to retrieved documents. Extract citations from actual legal text in retrieved chunks rather than generating from patterns. |
| Generation Without Verification | Sequential token generation produces citations one word at a time based on probability. Common cases like Brown v. Board have high probability. Obscure cases have low probability, so model generates plausible-sounding alternatives. | No verification step checks whether generated citation references real case. Model proceeds to next sentence after successfully matching citation format pattern. | Post-generation citation extraction and database lookup. Query Westlaw, LexisNexis, or CourtListener to verify each citation exists before showing to users. |
| Misaligned Optimization | Training optimizes for fluency and coherence, not factual accuracy. Competing pressures: instruction-following says answer comprehensively, pattern-matching says include citations. Model resolves by generating citations when context lacks support. | Format correctness easy to verify during generation, but existence verification requires external lookup. RLHF focused on helpfulness rather than domain-specific citation accuracy. | Structured output formats separating verified citations from analysis. Attribution validation using secondary LLM call to confirm cited cases support propositions. |

Consider a query about employer liability for independent contractor misconduct. A system without validation might generate:

“Courts have established that employers bear vicarious liability for independent contractors in certain circumstances. Anderson v. TechCorp Industries, 847 F.3d 392 (7th Cir. 2019) held that the degree of control exercised determines liability. Similarly, Martinez v. Regional Services LLC, 623 F. Supp. 2d 441 (S.D.N.Y. 2018) extended this principle to digital platform workers.”

Both citations follow perfect Bluebook format. The legal reasoning sounds plausible. But neither case exists. The model generated them because the query pattern suggested citations should appear, and it learned to produce properly formatted references.

A grounded system working from retrieved documents produces:

“The general rule is that employers are not vicariously liable for torts of independent contractors. However, exceptions exist when the employer retains control over the manner of work performance. [Link to Restatement (Second) of Torts § 414]. Courts apply a multi-factor test examining the degree of control, method of payment, and whether the work is part of the employer’s regular business. [Link to relevant retrieved case law].”

The grounded response extracts legal principles from actual retrieved sources, links directly to those sources, and avoids citing cases that weren’t in the retrieval results.

The Training Data Pattern Problem

Language models learn that legal analysis includes citations. Training examples show arguments supported by case references, statutory citations backing legal propositions, and regulatory citations grounding compliance advice. The model internalizes this pattern: strong legal arguments cite supporting authority.

When prompted to answer a legal question, the model generates text matching learned patterns. If the training data shows that discussions of employment discrimination typically cite cases, the model generates employment discrimination analysis with citations. The model doesn’t verify whether those specific citations exist because verification isn’t part of the generation process.

The language model has seen thousands of valid citations during training and learned that citations follow a specific style (Bluebook format for US legal writing) with a typical citation density (legal analysis in training data included a citation every 2-3 sentences on average). Given a prompt requiring legal analysis, the model reproduces these learned patterns.

The issue compounds when training data includes low-quality legal writing with citation errors. If the training corpus contained articles misattributing holdings or briefs citing inapplicable cases, the model learned those errors as valid patterns.

Generation Without Verification

Standard language model generation produces one token at a time based on probability distributions over the vocabulary. When generating a case citation, the model predicts each word sequentially. Each prediction uses context from previous tokens and learned patterns from training.

This sequential generation lacks a verification step. After generating “Smith v. Jones, 123 F.3d 456 (9th Cir. 2020),” the model doesn’t check whether that citation references a real case. It proceeds to the next sentence, having successfully generated text matching the statistical pattern of legal citations.

The probability distribution driving generation reflects which citations appeared frequently in training data. Common cases like Brown v. Board of Education or Miranda v. Arizona have high probability because the model encountered them repeatedly. Obscure district court opinions have lower probability. When the model needs to cite a case addressing a niche issue, it often generates a plausible-sounding citation rather than retrieving the actual obscure case addressing that issue.

RAG systems theoretically prevent this by grounding generation in retrieved documents. The model should extract citations from retrieved case law rather than generating them freely. In practice, implementation details determine whether this constraint actually operates. If the prompt doesn’t explicitly instruct the model to cite only from provided context, or if the model’s instruction-following isn’t reliable, it may revert to generating citations from learned patterns.

Misaligned Optimization Objectives

Language models optimize for fluency and coherence, not factual accuracy. During training, the model learns to predict the next token given previous context. This objective rewards text that matches training distribution patterns. Legal citations in training data follow consistent formats, so the model learns to generate well-formatted citations. Accuracy (whether the cited case actually exists and says what’s attributed to it) isn’t part of the optimization objective.

Reinforcement learning from human feedback (RLHF) can improve factual accuracy if evaluators specifically reward accurate citations and penalize hallucinations. Many models receive RLHF focused on helpfulness, harmlessness, and general factuality but not domain-specific legal citation accuracy. Without explicit optimization for citation verification, models continue generating plausible-sounding fabrications because that behavior matches learned patterns from training data.

The model faces competing pressures during generation. Instruction-following suggests it should answer the user’s question comprehensively. Pattern-matching learned from training data suggests comprehensive legal analysis includes supporting citations. If the retrieved context lacks direct citation support for a point, but the model’s training data patterns indicate a citation should appear, the model resolves this tension by generating a citation. Fluency and format correctness are easy to verify during generation (does this look like a proper citation?), while existence verification requires external database lookup.

When AI Legal Research Accuracy Breaks Down Most

Citation hallucinations concentrate in predictable scenarios. When attorneys request research on niche legal issues with limited case law, retrieval may return only tangentially related cases. The model recognizes the retrieved context doesn’t directly support the specific legal proposition needed. Rather than stating insufficient authority exists, it generates citations matching the expected pattern.

Complex multi-jurisdictional queries create hallucination risk. An attorney asking about how different circuits have addressed an issue needs citations from multiple jurisdictions. If retrieval returns cases from only some of the requested jurisdictions, the model sometimes generates citations for missing jurisdictions to provide comprehensive coverage.

Recent developments pose particular risk. When attorneys research new legal issues or very recent cases, the retrieval database may lack relevant material if indexing lags. The model’s training data (typically with a cutoff date) also lacks current information. Faced with a query about legal developments after its knowledge cutoff, the model sometimes generates plausible-sounding recent citations because the query pattern suggests recent cases should exist.

Requests for specific case counts or aggregate statistics trigger fabrication. Questions like “how many circuit courts have adopted this standard?” require factual knowledge the model doesn’t possess unless explicitly provided in retrieved context. Rather than admitting uncertainty, models often generate specific numbers that sound plausible but lack factual basis.

Building Reliable AI Legal Research Systems

Legal AI engineering demands systematic approaches to reliability. No single technique eliminates hallucinations; reliable systems combine complementary approaches.

How to Prevent AI Citation Errors: Validation Layers

Post-generation validation extracts all citations from the AI’s response before showing it to users. A citation parser identifies references following legal citation formats and pulls party names, reporters, courts, dates, and page numbers into structured data. This extraction enables programmatic verification.

Database lookup checks whether each extracted citation exists. The system queries authoritative legal databases (Westlaw, LexisNexis, or open-source legal corpora like CourtListener) with the citation identifiers. A citation to “Smith v. Jones, 123 F.3d 456 (9th Cir. 2020)” gets verified by checking whether the 9th Circuit published an opinion at that reporter location in that year with those parties.
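The extraction and lookup steps might look roughly like the sketch below. The regular expression covers only a few federal reporters and is an illustrative assumption, not a full Bluebook parser. The `known_citations` set is a stand-in for a query against an authoritative database, whose actual API is not shown here.

```python
import re

# Matches e.g. "123 F.3d 456 (9th Cir. 2020)" or "347 U.S. 483 (1954)".
CITATION_RE = re.compile(
    r"(?P<volume>\d+)\s+"
    r"(?P<reporter>F\. Supp\.(?: [23]d)?|F\.(?:2d|3d|4th)?|U\.S\.)\s+"
    r"(?P<page>\d+)\s+"
    r"\((?P<court>[^()]*?)\s*(?P<year>\d{4})\)"
)

def extract_citations(text):
    """Pull reporter citations out of free text as structured dictionaries."""
    return [m.groupdict() for m in CITATION_RE.finditer(text)]

def verify_citations(text, known_citations):
    """Mark each extracted citation as verified if it exists in the lookup set."""
    results = []
    for cite in extract_citations(text):
        key = (cite["volume"], cite["reporter"], cite["page"])
        results.append({**cite, "verified": key in known_citations})
    return results

# Stand-in for a database lookup (assumption): one real Supreme Court citation.
known_citations = {("347", "U.S.", "483")}
draft = (
    "Brown v. Board of Education, 347 U.S. 483 (1954). "
    "Anderson v. TechCorp Industries, 847 F.3d 392 (7th Cir. 2019) held that "
    "the degree of control exercised determines liability."
)
results = verify_citations(draft, known_citations)
```

The fabricated Anderson citation from the earlier example parses cleanly but fails the lookup, which is the signal the validation layer acts on.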

Quote verification goes beyond existence checking. When the AI attributes specific language or holdings to a case, the validation layer retrieves the full text of the cited case and confirms the attributed quote actually appears in that opinion. String matching handles direct quotes. Semantic similarity checking helps verify paraphrased holdings, though with lower confidence than exact matches.
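For direct quotes, the string-matching step can be as simple as the sketch below. It normalizes whitespace, case, and curly quotes before comparing; the helper names are hypothetical, and paraphrased holdings would need a separate semantic check that is not shown.

```python
import re

def normalize(text):
    """Lowercase, straighten curly quotes, and collapse runs of whitespace."""
    text = text.replace("\u2019", "'").replace("\u201c", '"').replace("\u201d", '"')
    return re.sub(r"\s+", " ", text).strip().lower()

def quote_appears(quote, opinion_text):
    """True if the quote occurs verbatim (after normalization) in the opinion."""
    return normalize(quote) in normalize(opinion_text)

opinion = "The court held that the degree  of\ncontrol exercised determines liability."
found = quote_appears("degree of control exercised", opinion)
missing = quote_appears("degree of supervision", opinion)
```

Normalizing first avoids false negatives caused by line breaks or typographic quotes in the source text.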

Attribution validation ensures cited cases actually support the propositions they’re cited for. This requires more sophisticated checking than quote verification. The system evaluates whether the cited case discusses the relevant legal issue and whether the case supports or contradicts the proposition. This typically employs a secondary LLM call using the original proposition, the attributed citation, and the full case text, asking specifically whether the case supports the claim.

When validation fails, the system has several response options. Conservative implementations remove the problematic citation and either regenerate that portion of the response or mark it as unsupported. Aggressive implementations flag the entire response as unreliable and regenerate from scratch. The appropriate threshold depends on the use case: attorney-facing tools can surface uncertain citations with warnings, while client-facing tools should suppress unverified material entirely.

Structured Output Constraints

Requiring structured output formats reduces hallucination risk by making validation programmatic. Instead of generating free-form legal analysis embedding citations throughout, the system generates JSON objects with separated fields for analysis and supporting citations.

A structured response might include fields for legal proposition, supporting case citations, statutory authority, and confidence scores. Each citation field requires structured data: party names, reporter, court, year. This structure enables automated validation of each component before assembling the final natural language response shown to users.
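A structured response of this kind could be modeled as in the sketch below. The field names follow the paragraph above, but the exact schema is an assumption made for illustration.

```python
import json
from dataclasses import dataclass

@dataclass
class Citation:
    parties: str
    reporter: str
    court: str
    year: int

@dataclass
class Finding:
    proposition: str
    citations: list
    confidence: float

def parse_finding(raw_json):
    """Parse model output; missing or unexpected citation fields raise TypeError."""
    data = json.loads(raw_json)
    citations = [Citation(**c) for c in data["citations"]]
    confidence = float(data["confidence"])
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return Finding(data["proposition"], citations, confidence)

# Placeholder citation, as used elsewhere in this article.
raw = json.dumps({
    "proposition": "Degree of control determines liability.",
    "citations": [{"parties": "Smith v. Jones", "reporter": "123 F.3d 456",
                   "court": "9th Cir.", "year": 2020}],
    "confidence": 0.9,
})
finding = parse_finding(raw)
```

Because every citation arrives as discrete fields, each one can be checked against a database before any natural language response is assembled.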

Structured generation also separates extraction from synthesis. The system first extracts relevant citations from retrieved legal documents, storing them as structured data with full metadata. During generation, it references these verified citations when building the response rather than generating citations as free text. This architecture makes it impossible to cite cases that weren’t in the retrieved set.

Tool-use patterns implement this separation cleanly. The language model calls a “search_cases” tool with specific legal concepts, receives structured results including case citations and relevant excerpts, then generates analysis referencing the verified cases returned by the tool. Citations become function call results rather than generated text.
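The sketch below illustrates the idea with a toy `search_cases` tool and a renderer that only accepts case ids returned by that tool. The `[[id]]` placeholder syntax and both function bodies are assumptions for illustration, and the citation is the placeholder used elsewhere in this article.

```python
import re

def search_cases(concept, case_index):
    """Hypothetical tool: return structured records whose summary mentions the concept."""
    return [c for c in case_index if concept.lower() in c["summary"].lower()]

def render_with_citations(draft, tool_results):
    """Replace [[id]] placeholders with citations taken from tool results only."""
    by_id = {c["id"]: c["citation"] for c in tool_results}

    def replace(match):
        case_id = match.group(1)
        if case_id not in by_id:
            # The draft referenced a case the tool never returned.
            raise KeyError(f"draft cites unknown case id: {case_id}")
        return by_id[case_id]

    return re.sub(r"\[\[(\w+)\]\]", replace, draft)

case_index = [{
    "id": "c1",
    "citation": "Smith v. Jones, 123 F.3d 456 (9th Cir. 2020)",
    "summary": "Vicarious liability turns on degree of control.",
}]
results = search_cases("vicarious liability", case_index)
final = render_with_citations("Liability turns on control. [[c1]]", results)
```

The model writes only an identifier; the citation text itself always comes from the tool result, so an unknown identifier fails loudly instead of producing a fabricated reference.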

Improving AI Legal Research Accuracy Through Grounding Detection

Even with retrieved context, models sometimes generate claims not supported by the provided documents. Grounding detection evaluates whether each sentence in the generated response has support in the retrieved chunks.

Sentence-level grounding analysis breaks the response into individual claims and evaluates each against retrieved context. For each claim, the system searches retrieved chunks for supporting evidence. If support exists, the claim is grounded. If support is absent, the claim is potentially fabricated.

LLM-as-judge approaches use a second model call to evaluate grounding. The prompt provides the retrieved context and the generated claim, then asks: “Is this claim directly supported by the provided context? Answer Yes/No with explanation.” This catches subtle ungrounded statements that string matching would miss.

Strict grounding enforcement rejects responses where significant portions lack support in retrieved context. If more than 10-15% of claims can’t be grounded to retrieved documents, the system either regenerates with stronger grounding instructions or returns a response explicitly noting information limitations.
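A rough sketch of threshold-based grounding enforcement follows. Token overlap is used here only as a cheap stand-in for the semantic or LLM-based check a real system would use. The 0.6 overlap cutoff is an arbitrary assumption, and the 15% limit comes from the range mentioned above.

```python
import re

def tokens(text):
    """Lowercased alphanumeric tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def is_grounded(claim, chunks, min_overlap=0.6):
    """Grounded if enough of the claim's tokens appear in a single chunk."""
    claim_tokens = tokens(claim)
    if not claim_tokens:
        return True
    return any(
        len(claim_tokens & tokens(chunk)) / len(claim_tokens) >= min_overlap
        for chunk in chunks
    )

def grounding_report(response, chunks, max_ungrounded=0.15):
    """Split into sentences (naively) and report the ungrounded share."""
    # Naive splitter: legal abbreviations such as "v." would need special handling.
    claims = [s.strip() for s in re.split(r"(?<=[.!?])\s+", response) if s.strip()]
    ungrounded = [c for c in claims if not is_grounded(c, chunks)]
    ratio = len(ungrounded) / len(claims) if claims else 0.0
    return {"ungrounded": ungrounded, "ratio": ratio, "accept": ratio <= max_ungrounded}

chunks = ["Employers are generally not vicariously liable for torts of independent contractors."]
response = (
    "Employers are generally not liable for torts of independent contractors. "
    "Seven circuits have adopted this rule."
)
report = grounding_report(response, chunks)
```

The second sentence has no support in the retrieved chunk, so half the claims are ungrounded and the response is rejected.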

Citation density analysis detects likely hallucinations by comparing citation patterns. Responses with long passages of legal analysis without citations deviate from typical legal writing patterns and suggest the model generated claims without grounding them in specific authorities. While not definitive (some legal analysis legitimately proceeds without constant citation), unusual citation patterns trigger additional scrutiny.

Compliance-Critical Requirements

A comprehensive legal AI roadmap must account for traceability, source grounding, and jurisdiction-specific accuracy as foundational components, not afterthoughts.

Traceability and Audit Trails

Every research response needs complete provenance tracking. When an attorney relies on AI research for a brief or legal advice, audit trails must show which documents the system retrieved, what context went to the generation model, what the raw model output contained, which validation steps ran, and what the final verified output included.

This traceability serves multiple purposes. If opposing counsel challenges a legal proposition, the attorney must verify the AI’s research. Complete audit trails enable reconstruction of the research process. If a case citation proves incorrect, the firm needs to understand whether the error originated in retrieval, generation, or validation to prevent recurrence.

Regulatory compliance often requires demonstrating due diligence in research processes. Bar association ethics opinions on AI use emphasize attorney responsibility for verifying AI output. Comprehensive audit trails demonstrate systematic verification processes rather than blind reliance on AI suggestions.

Implementation requires logging at each pipeline stage. Retrieval logging captures the query, retrieval parameters, returned chunks, and relevance scores. Generation logging includes the full prompt with retrieved context, model parameters (temperature, top-p), and raw output before post-processing. Validation logging records which checks ran, what they found, and which content got modified or flagged.
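Stage-by-stage logging can be sketched as below. The `AuditTrail` class and its field names are hypothetical; a production system would write to durable, append-only storage rather than an in-memory list.

```python
import json
import time
import uuid

class AuditTrail:
    """Collects one event per pipeline stage under a shared request id."""

    def __init__(self):
        self.request_id = str(uuid.uuid4())
        self.events = []

    def log(self, stage, **payload):
        self.events.append({
            "request_id": self.request_id,
            "stage": stage,
            "timestamp": time.time(),
            **payload,
        })

    def to_jsonl(self):
        """Serialize events as JSON Lines, one event per line."""
        return "\n".join(json.dumps(e, sort_keys=True) for e in self.events)

trail = AuditTrail()
trail.log("retrieval", query="non-compete enforceability", top_k=10,
          chunk_ids=["doc1#3", "doc7#1"], scores=[0.91, 0.84])
trail.log("generation", temperature=0.0, top_p=1.0,
          prompt="<full prompt with context>", raw_output="<model output>")
trail.log("validation", checks=["citation_lookup", "grounding"], flagged=[])
```

Because every event carries the same `request_id`, a single response can later be reconstructed from retrieval through validation.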

Source Document Grounding

A production-ready legal AI system should never present information without clear source attribution. Every legal proposition needs linked references to supporting cases, statutes, or regulations. This requirement goes beyond citations embedded in narrative text.

Inline citations with direct links enable immediate verification. When the system states a legal standard, the citation isn’t just text (e.g., “See Smith v. Jones, 123 F.3d 456”) but a hyperlink directly to the case in an authoritative database. Attorneys verify claims with a single click rather than manually searching for cited authorities.

Contextual source highlighting shows exactly which passage in a source document supports each claim. Rather than citing an entire 50-page opinion, the system links to the specific paragraph containing the relevant analysis. This granular source grounding enables rapid verification and builds user trust by demonstrating precise evidence.

Confidence scores per source help attorneys allocate verification effort. When the system cites a case with 95% confidence (strong semantic match between claim and source text, quote verified, case directly on point), less manual checking is needed. Citations with 70% confidence (conceptually related but not directly on point) trigger closer attorney review.

Multi-source triangulation increases reliability. When multiple independent sources support the same legal proposition, confidence appropriately increases. The system might cite three circuit court opinions adopting the same standard, showing convergence across jurisdictions rather than reliance on a single case.

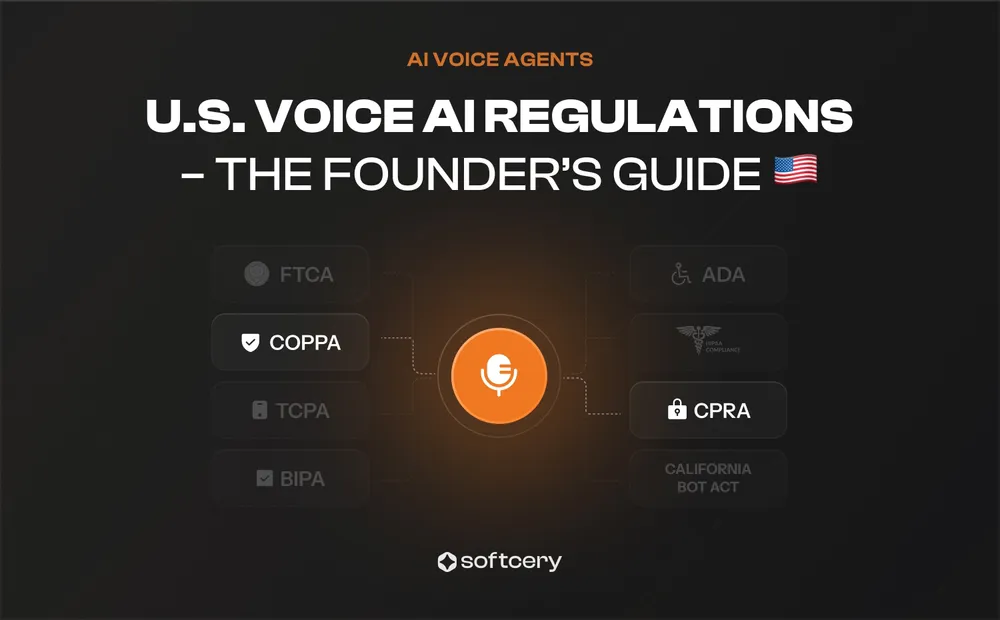

Jurisdiction-Specific Accuracy

Legal research must respect jurisdictional boundaries. Federal law differs from state law, state laws differ from each other, and international legal systems operate on entirely different principles. Systems that blend jurisdictions produce dangerous results.

Jurisdiction-aware retrieval filters source documents by applicable legal regime before searching. When an attorney specifies New York employment law as the research domain, the retrieval system excludes California case law and federal decisions on unrelated matters. This prevents contamination where the system cites inapplicable authority because it’s conceptually similar.

Metadata tagging during document indexing captures jurisdiction, court level, decision date, and precedential status. These become filter parameters during retrieval. The system can constrain searches to binding precedent (excluding persuasive authority from other jurisdictions) or expand to comparative law research when appropriate.
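A minimal sketch of metadata-based filtering follows, using the four tags named above. The `DocMeta` fields, the jurisdiction codes, and the document ids are illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DocMeta:
    doc_id: str
    jurisdiction: str
    court_level: str
    year: int
    precedential: bool

def filter_by_jurisdiction(docs, jurisdictions, binding_only=False):
    """Keep documents from the allowed jurisdictions, optionally only precedential ones."""
    allowed = set(jurisdictions)
    return [
        d for d in docs
        if d.jurisdiction in allowed and (d.precedential or not binding_only)
    ]

docs = [
    DocMeta("ny-1", "NY", "appellate", 2019, True),
    DocMeta("ny-2", "NY", "trial", 2021, False),
    DocMeta("ca-1", "CA", "supreme", 2020, True),
]
ny_all = filter_by_jurisdiction(docs, ["NY"])
ny_binding = filter_by_jurisdiction(docs, ["NY"], binding_only=True)
```

Applying the filter before similarity search means inapplicable authority never becomes a candidate, however close it sits in vector space.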

Conflict detection identifies when different jurisdictions take inconsistent approaches. If an attorney’s query could implicate multiple jurisdictions, the system should flag jurisdictional splits rather than presenting one approach as universal. A query about non-compete enforceability should note that California largely prohibits these agreements while other states enforce them under reasonableness standards.

Jurisdiction verification during validation checks whether cited cases actually apply in the relevant legal context. A citation to 9th Circuit precedent in response to a question about 5th Circuit law should trigger warnings unless the attorney specifically requested persuasive authority from other circuits.


Case Study: How AI Legal Research Works in Production

The validation layers, grounding detection, and compliance requirements described above might sound theoretical. Here is how these architectural decisions look in a real production system. Softcery built a compliance Q&A platform that handles regulatory questions across New Zealand and Australian jurisdictions with full citation tracking and verification.

Multi-Jurisdiction Architecture

The system maintains completely separate vector databases for New Zealand and Australian legal content. This architectural separation prevents jurisdictional contamination at the infrastructure level rather than relying on metadata filtering.

Category-aware retrieval prevents guidance documents from overshadowing binding law. Pure semantic similarity might rank a detailed guidance document higher than a terse statutory provision because the guidance uses more words and embeds more richly. Legal hierarchy constraints override pure similarity scoring to surface authoritative sources first.
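One simple way to express such a hierarchy constraint is to sort by authority tier first and similarity second, as in the sketch below. The three categories and their order are assumptions for illustration, not a description of the production system.

```python
# Lower rank means more authoritative (assumed ordering).
AUTHORITY_RANK = {"statute": 0, "regulation": 1, "guidance": 2}

def rerank_by_authority(hits):
    """Order hits by authority tier, then by similarity score within a tier."""
    return sorted(
        hits,
        key=lambda h: (AUTHORITY_RANK.get(h["category"], len(AUTHORITY_RANK)), -h["score"]),
    )

hits = [
    {"id": "guide-12", "category": "guidance", "score": 0.93},
    {"id": "act-s5", "category": "statute", "score": 0.81},
    {"id": "reg-4", "category": "regulation", "score": 0.85},
]
ordered = rerank_by_authority(hits)
```

The terse statutory provision now outranks the wordier guidance document despite its lower similarity score.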

Source Grounding Implementation

Relevance filtering detects questions outside the system’s knowledge domain. When a query asks about criminal law but the database contains only regulatory compliance materials, semantic similarity might still return marginally related documents. Confidence scoring identifies when top retrieval results have low similarity scores, triggering “I don’t have information about that in my knowledge base” responses rather than attempting to answer from tangentially related materials.
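The out-of-domain check can be sketched as a similarity threshold applied before generation. The 0.75 cutoff is an arbitrary assumption that would need tuning against real queries, and `generate` stands in for whatever generation step the system uses.

```python
FALLBACK = "I don't have information about that in my knowledge base."

def answer_or_decline(hits, generate, min_score=0.75):
    """Decline when no retrieved chunk clears the similarity threshold."""
    relevant = [h for h in hits if h["score"] >= min_score]
    if not relevant:
        return FALLBACK
    return generate(relevant)

def fake_generate(chunks):
    """Stand-in generator that just reports how many chunks it received."""
    return f"answer from {len(chunks)} chunk(s)"

in_domain = answer_or_decline(
    [{"id": "reg-4", "score": 0.88}, {"id": "guide-2", "score": 0.41}], fake_generate
)
out_of_domain = answer_or_decline([{"id": "reg-9", "score": 0.32}], fake_generate)
```

Low-scoring chunks are also withheld from the generator, so marginal material does not leak into an otherwise well-supported answer.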

Context-aware query rewriting handles follow-up questions in conversation. When a user asks “What about contractors?” as a follow-up, the system doesn’t search for “contractors” in isolation. It reformulates the query using conversation context: “What are the compliance obligations regarding contractors in the context of [previous topic]?” This reformulation preserves intent while enabling semantic search to find relevant materials.
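The reformulation step can be approximated with a template, as in the sketch below. Production systems often delegate rewriting to a language model; the prefix heuristic, the `rewrite_query` function, and the assumption that the previous topic is already tracked are simplifications made for this example.

```python
from __future__ import annotations

from typing import Optional

# Hypothetical markers of an elliptical follow-up question.
FOLLOW_UP_PREFIXES = ("what about", "how about", "and ")


def rewrite_query(query: str, previous_topic: Optional[str]) -> str:
    """Expand a short follow-up into a standalone, searchable question."""
    normalized = query.strip()
    lowered = normalized.lower()
    if previous_topic is None:
        return normalized
    for prefix in FOLLOW_UP_PREFIXES:
        if lowered.startswith(prefix):
            # Remove the prefix and trailing punctuation to isolate the subject.
            subject = normalized[len(prefix):].strip(" ?.")
            if not subject:
                return normalized
            return (
                f"What are the compliance obligations regarding {subject} "
                f"in the context of {previous_topic}?"
            )
    # Not recognized as a follow-up: search with the query as written.
    return normalized


standalone = rewrite_query("What about contractors?", "health and safety training")
```

The rewritten query is what gets embedded and searched, while the user's original wording can still be shown in the conversation.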

Validation Architecture

Post-generation validation checks each response before display. The validation layer extracts claims from the generated text and evaluates whether each claim has support in the retrieved source chunks.

Structured output validation confirms the response includes required elements. Answers must have citations, citations must include document links, and substantive responses must exceed minimum length thresholds (preventing “yes/no” answers where detailed analysis is appropriate).
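A structural check of this kind might look like the following sketch. The `Answer` and `LinkedCitation` types, the 200-character minimum, and the URL test are assumptions chosen for illustration, not the production schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field

MIN_ANSWER_CHARS = 200  # hypothetical minimum for a substantive response


@dataclass
class LinkedCitation:
    title: str
    url: str


@dataclass
class Answer:
    text: str
    citations: list[LinkedCitation] = field(default_factory=list)


def validate_structure(answer: Answer, min_chars: int = MIN_ANSWER_CHARS) -> list[str]:
    """Return a list of structural problems; an empty list means valid."""
    problems: list[str] = []
    if not answer.citations:
        problems.append("missing citations")
    for citation in answer.citations:
        if not citation.url.startswith(("http://", "https://")):
            problems.append(f"citation {citation.title!r} has no document link")
    if len(answer.text.strip()) < min_chars:
        problems.append("answer shorter than minimum length")
    return problems
```

Returning every problem at once, instead of stopping at the first, gives a regeneration step enough detail to correct all defects in one pass.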

Grounding verification operates sentence-by-sentence. For each claim in the response, the system performs similarity search against retrieved chunks to find supporting evidence. Claims without support get flagged for regeneration or removal.
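The sentence-level check can be sketched as below. To stay self-contained, the example substitutes bag-of-words cosine similarity for embedding similarity, and the 0.5 threshold and regex sentence splitter are assumptions. Legal text with abbreviations such as "v." would need a more careful splitter.

```python
from __future__ import annotations

import math
import re
from collections import Counter


def _vector(text: str) -> Counter:
    """Bag-of-words vector; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def split_sentences(text: str) -> list[str]:
    # Naive split on sentence-ending punctuation followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def unsupported_sentences(
    response: str, chunks: list[str], threshold: float = 0.5
) -> list[str]:
    """Return sentences whose best match among the chunks is below threshold."""
    chunk_vectors = [_vector(c) for c in chunks]
    flagged = []
    for sentence in split_sentences(response):
        sentence_vector = _vector(sentence)
        best = max((cosine(sentence_vector, cv) for cv in chunk_vectors), default=0.0)
        if best < threshold:
            flagged.append(sentence)
    return flagged


# Placeholder text, not a real legal provision.
chunks = ["Employers must keep training records for five years."]
response = (
    "Employers must keep training records for five years. "
    "Penalties double after a second offence."
)
flagged = unsupported_sentences(response, chunks)
```

In this example only the second sentence is flagged, because nothing in the retrieved chunk supports it. Flagged sentences would then be regenerated or removed, as described above.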

Conclusion

Legal AI research systems that work in production differ fundamentally from impressive demos. Reliable systems ground every claim in verified source documents, validate citations against authoritative legal databases before display, maintain strict jurisdiction boundaries that keep inapplicable law out of results, and provide complete audit trails showing exactly how each response was generated.

The citation hallucination problem stems from architectural choices, not inherent AI limitations. Systems generating citations as natural language inevitably fabricate. Systems extracting citations from verified source documents through structured retrieval pipelines maintain accuracy.

Compliance-critical legal applications demand infrastructure that treats verification and traceability as core requirements, not optional enhancements. Source grounding, validation layers, and audit logging must be built into the system architecture from the beginning.

The real-life case study from Softcery demonstrates that production-ready legal research systems are achievable with current technology. The requirements include multi-jurisdiction knowledge separation, citation verification before output, grounding detection catching unsupported claims, and organization-level data isolation protecting confidentiality. These are engineering challenges with proven solutions rather than fundamental research problems.

Legal AI research tools will continue improving as embedding models become more legally sophisticated, validation techniques become more reliable, and retrieval architectures better preserve document context. The foundational requirement remains constant: systems must verify what they claim before attorneys rely on that research.

Frequently Asked Questions

Why do AI legal research tools fabricate citations?

AI systems generate citations based on statistical patterns learned during training rather than verifying references against legal databases. Language models observed thousands of properly formatted case citations in training data and learned the structure: party names, reporter volumes, court identifiers, years. When prompted to support legal arguments, models generate text matching these learned patterns without checking whether the cited cases actually exist or say what’s attributed to them. This produces perfectly formatted citations referencing nonexistent cases. RAG systems prevent this by constraining generation to cite only from retrieved legal documents, but implementation quality determines whether this constraint actually operates.

How accurate is AI legal research?

Accuracy depends entirely on architecture. Systems using pure language model generation without verification produce citation hallucinations at significant rates, generating references to nonexistent cases or misattributing holdings. Production systems using RAG with post-generation validation achieve substantially higher citation accuracy by grounding all claims in verified source documents, validating citations against legal databases before display, and implementing multi-layer checking between generation and output. The accuracy gap stems from engineering choices about verification architecture rather than fundamental AI capabilities. Legal research systems without explicit validation infrastructure should not be trusted for professional use.

How do production systems catch citation errors before they reach users?

Production-ready legal research systems implement multiple validation layers operating between generation and display. Post-generation citation extraction pulls all case references into structured data for programmatic checking. Database lookups verify each citation exists in authoritative legal databases. Quote verification confirms attributed language actually appears in cited cases. Grounding detection evaluates whether claims have support in retrieved source documents. Structured output formats separate verified citations from analysis, making validation programmatic. When validation fails, responses get regenerated with stricter grounding requirements or flagged for human review. Systems also implement relevance filtering to detect out-of-domain queries and explicitly decline to answer rather than generating from tangentially related materials.

What makes legal research different from other AI applications?

Legal research demands absolute accuracy where most AI applications tolerate probabilistic outputs. A single fabricated case citation creates malpractice exposure and professional responsibility violations. Legal research also requires jurisdiction-specific precision because laws vary by state, circuit, and country. Systems that blend jurisdictions produce dangerous results by citing inapplicable authority. Traceability requirements exceed other domains since attorneys must verify AI research and demonstrate due diligence for bar compliance. Source grounding must be granular (linking specific claims to specific passages, not just full documents) to enable rapid verification. Finally, legal research handles adversarial scenarios where accuracy matters most: opposing counsel will check every citation, courts will evaluate supporting authority, and compliance violations trigger regulatory consequences.

Can AI legal research systems handle multiple jurisdictions?

Multi-jurisdiction systems work when architecture maintains strict separation between legal regimes. Upskill AI demonstrates this through completely separate vector databases for New Zealand and Australian content, preventing jurisdictional contamination at the infrastructure level. Queries route exclusively to the applicable jurisdiction’s database. Document metadata captures jurisdiction, court hierarchy, and effective dates, enabling filtered retrieval. The system can also detect jurisdictional splits (when different jurisdictions take conflicting approaches to the same issue) and surface these differences rather than presenting one approach as universal. Systems that rely on metadata filtering rather than database separation face higher contamination risk, where conceptually similar but jurisdictionally inapplicable authorities leak into results.