Choosing an LLM for Voice Agents: Speed, Accuracy, Cost

Last updated on April 24, 2026

With a growing number of LLMs available – ranging from proprietary models like OpenAI’s GPT-5.4 and Anthropic Claude Sonnet 4.6 to open-weight alternatives such as Meta’s Llama 4 Maverick, DeepSeek V4-Flash, and GLM-4.6 – businesses must carefully evaluate their options. Factors like response latency, throughput, cost per token, hosting flexibility, prompt caching support, and reasoning-mode tradeoffs all play a crucial role in determining the best-fit model for a given voice use case.

What Are Large Language Models (LLMs), and Why Are They Important for Voice AI?

Large Language Models (LLMs) are advanced neural networks trained on massive amounts of text data, enabling them to process, understand, and generate human-like responses in natural language. These models leverage deep learning architectures, such as transformers, to predict text based on input prompts, making them incredibly versatile for various AI-driven applications.

In the context of voice AI, LLMs play a fundamental role in ensuring smooth, intelligent, and context-aware conversations. Unlike traditional voice assistants that rely on predefined scripts or rigid rule-based systems, LLM-powered AI voice agents can:

- Comprehend context and intent;

- Generate human-like responses;

- Follow complex instructions;

- Handle dynamic, real-time interactions;

- Support multilingual communication.

Why Are LLMs Critical for Voice AI?

AI voice agents must start responding within a fraction of a second to maintain a seamless real-time conversation. TTFT (Time to First Token) measures how long it takes for an AI model to generate the first token of its response after receiving a query. MMLU (Massive Multitask Language Understanding) is a benchmark that evaluates an AI model’s ability to understand and answer complex questions across multiple subjects, including math, law, medicine, and general knowledge.

The choice of LLM directly impacts:

- Response speed (latency) – Faster models like Grok 4.1 Fast (0.59s TTFT) and Claude Haiku 4.5 (0.70s TTFT) allow near-instant interactions. Reasoning (“thinking”) modes on flagship models add 8–200 seconds and are voice-unviable.

- Accuracy and coherence – A high MMLU-Pro score (e.g., ~86% for Claude Sonnet 4.6) indicates the model is more likely to handle complex queries with logical consistency.

- Cost-effectiveness – Businesses processing millions of voice interactions monthly benefit from cost-efficient models like Grok 4.1 Fast ($0.20 input / $0.50 output per 1M tokens) and Gemini 3.1 Flash-Lite ($0.25 / $1.50) vs. Claude Sonnet 4.6 ($3.00 / $15.00). Prompt caching cuts repeated system-prompt cost to ~10% of base across major providers.

Now that we’ve covered the role of LLMs, let’s put it into context.

Key Challenges in Selecting an LLM for AI Voice Agents and Their Business Impact

Choosing the right large language model (LLM) for an AI voice agent is a strategic decision that directly affects customer experience, operational costs, and scalability. Unlike traditional chatbots, voice agents require real-time processing, seamless dialogue management, and accurate responses, making the selection process complex. Below, we explore the most critical challenges and their direct impact on business operations.

Demystifying LLM Selection: The Key Metrics That Matter

Latency & Performance Metrics

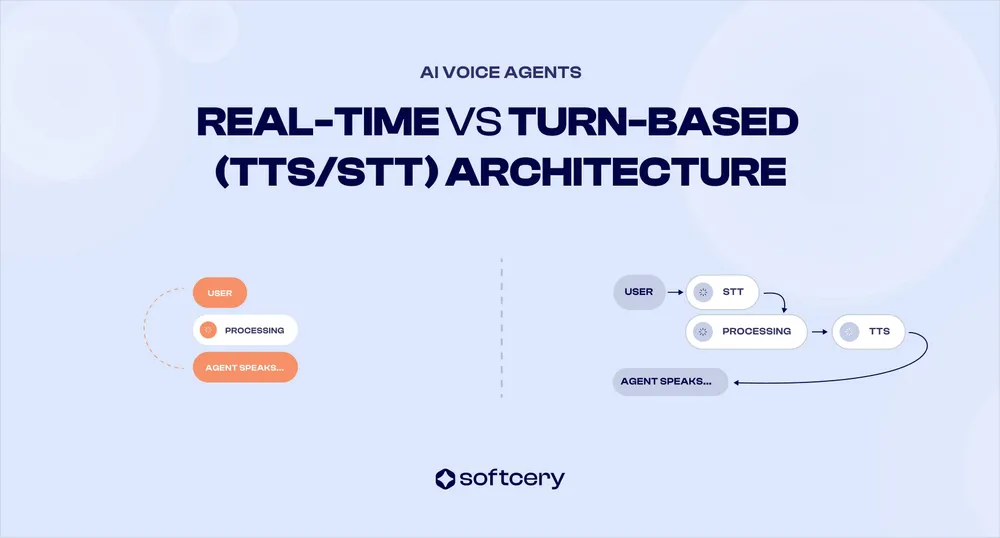

For voice assistants, responsiveness is critical. Latency directly affects the conversational flow: a slow response can feel unnatural or frustrating to users. We focus on Time to First Token (TTFT) and Tokens per Second (TPS), the generation throughput. All the models listed here support streaming output, so the agent can start speaking before the full answer is generated, which is essential for real-time voice.

Critical voice constraint: every flagship model in 2026 ships with a “reasoning” or “thinking” toggle. Reasoning mode produces better answers but TTFT climbs from the ~0.6–2 second range (non-reasoning / minimal effort) to roughly 8–200 seconds, which makes it unusable for real-time voice turns. The table below uses non-reasoning (or minimal-effort) variants where applicable. Latency benchmarks come from Artificial Analysis measurements as of April 2026.

| Model | TTFT (seconds) | Throughput (TPS) | Notes on Real-Time Behavior |
|---|---|---|---|
| Grok 4.1 Fast (non-reasoning) | 0.59 | 135 | Best-price 2M-context model; TTFT under most flagships at a fraction of the cost. |
| GPT-5.4 nano (minimal effort) | ~1.14 | 146 | Cheapest OpenAI; minimal reasoning effort for voice; medium effort TTFT climbs to ~3.13s. |
| Claude Haiku 4.5 | 0.70 | 97 | Released October 15, 2025; balances latency and quality at $1/$5 per 1M. |
| Kimi K2.6 | 1.04 (0.72 on Fireworks) | n/a | Strong agentic / function calling; intelligence above K2 0905 generation. |
| Claude Sonnet 4.6 (non-reasoning) | 1.36 | 43 | High accuracy when reasoning toggle is off; reasoning variant TTFT ~135s (voice-unsuitable). |
| Gemini 3 Flash Preview (non-reasoning) | ~1.35 | 161 | High intelligence; non-reasoning mode suitable for voice. |
| DeepSeek V4-Flash (non-reasoning) | ~2.0 | 27–33 (197 on Vertex) | Replaces V3.2-Exp as deepseek-chat default; cheap chat-optimized variant. |
| Llama 4 Maverick (Fireworks/Groq) | 0.4–0.5 | high | Open-weight; Groq deprecated March 9, 2026, but Fireworks/Together still serve. |
| Gemini 3.1 Flash-Lite Preview | ~5.2 (AA) | 314 | Highest TPS in class; cheapest Tier-1 model; implicit caching free. |
| GPT-5.4 mini (minimal effort) | varies | 163 | Mid-tier OpenAI; minimal reasoning effort for voice; medium effort TTFT ~8.24s. |
| GPT-5.4 (minimal effort) | ~1.1 | 77 | Flagship; minimal effort suitable for voice; high effort reaches ~166s and is offline-only. |
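To reproduce figures like these against your own stack, TTFT and TPS can be measured on any streaming response. The following is a minimal sketch, not a provider SDK example: `measure_stream` and its injectable `clock` argument are illustrative names, and the token iterator stands in for whatever stream object your client returns.

```python
import time
from typing import Callable, Iterable, Optional


def measure_stream(tokens: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> dict:
    """Consume a token stream and report TTFT and generation throughput.

    TTFT is the time from the start of consumption to the first token.
    TPS is computed over the generation phase only (after the first token),
    so a slow first token does not drag the throughput figure down.
    """
    start = clock()
    first_at: Optional[float] = None
    last_at = start
    count = 0
    for _ in tokens:
        last_at = clock()
        if first_at is None:
            first_at = last_at
        count += 1
    if first_at is None:
        raise ValueError("stream produced no tokens")
    gen_time = last_at - first_at
    # With a single token there is no generation phase to measure.
    tps = (count - 1) / gen_time if gen_time > 0 else None
    return {"ttft_s": first_at - start, "tps": tps, "tokens": count}
```

The sketch assumes the request is issued lazily when iteration begins. If your client sends the request before returning the stream, take the start timestamp before making the call instead.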

Business Impact of Latency

In real-world deployments, lower latency directly benefits user engagement and efficiency. Users are more likely to keep interacting when responses are prompt: studies show that delays beyond about one second start to feel awkward and can frustrate users in voice interactions. In customer-facing scenarios especially (e.g. a support hotline), shaving even a second off response times can yield measurable improvements in satisfaction. For instance, a McKinsey industry report found that a one-minute increase in average call handle time leads to a 10% drop in customer satisfaction scores.

While our focus is on seconds or fractions of a second per response, it all adds up: when AI agents respond in seconds or fractions of a second, they resolve queries faster, which shortens call times and reduces customer wait times. Faster responses also lower operating costs: if an AI agent works 10–20% faster due to low latency, it can handle more calls or free up human agents sooner, improving overall contact center efficiency.

Accuracy & Coherence Metrics

Accuracy evaluation for voice-agent use cases relies on a mix of general-reasoning benchmarks and agentic / tool-use benchmarks. The most relevant in April 2026:

- MMLU-Pro evaluates the model on a harder, more discriminating expansion of the original MMLU 57-subject test. Higher MMLU-Pro (%) means stronger broad world knowledge.

- GPQA (Graduate-Level Google-Proof Q&A) presents extremely challenging questions (often college or grad-level problems in sciences) that aren’t easily solved by memorization or a quick web search.

- BFCL v3 (Berkeley Function Calling Leaderboard) measures real-world function-calling reliability – directly relevant for voice agents that book appointments, query CRMs, or trigger workflows.

- τ-bench / τ²-bench / τ³-bench (Sierra benchmarks) measure tool-agent-user interaction quality. The 2026 update added voice full-duplex support (τ-Voice), making it a natural fit for voice agent selection.

- VoiceAgentBench (arXiv 2510.07978) is a voice-native evaluation suite, first posted to arXiv in October 2025, built specifically for voice agent reasoning and tool use.

| Model | MMLU-Pro (%) / Intelligence Index | GPQA (% or tier) | Notes |
|---|---|---|---|
| Claude Sonnet 4.6 | ~86 (MMLU-Pro) | high | Strong instruction following; SWE-bench 79.6%; excellent for complex AI agents. |
| GPT-5.4 (flagship) | Intelligence Index 64+ | 84 | MMLU-Pro 83.1%; flagship reasoning; reserve for non-voice agent steps. |
| Gemini 3 Flash | Intelligence Index 46 | high | Strong reasoning; voice-suitable in non-reasoning mode only. |
| Kimi K2.6 | above K2.5 | high | Strong agentic capabilities; open-weight; good function calling. |
| Claude Haiku 4.5 | Intelligence Index 31 | medium | Released October 15, 2025; high-volume production sweet spot. |
| Gemini 3.1 Flash-Lite | Intelligence Index 34 | medium | Cheapest Tier-1; excellent for high-volume voice routing. |
| GPT-5.4 mini | Intelligence Index 49 | medium-high | Cost-effective; non-reasoning mode for voice. |
| GPT-5.4 nano | Intelligence Index 24 | low-medium | Cheapest OpenAI; sub-agent / classification ideal. |
| DeepSeek V4-Flash (non-reasoning) | competitive | medium | Very low cost; chat-tuned; cache-hit pricing ~90% off miss price. |
| Grok 4.1 Fast | strong for tier | medium | 2M context at $0.20/$0.50; best-price large context. |
| Llama 4 Maverick | behind frontier | medium | Open-weight; suitable for self-hosted voice at scale. |
| GLM-4.6 / GLM-5 | Quality Index 49.64 (open SOTA) | high | z.AI open-weight; backbone for Ultravox v0.7; comparable to Claude Opus 4.6. |

The most reliable models for accuracy-first voice deployments – Claude Sonnet 4.6, GPT-5.4, and Gemini 3 Flash – score among the highest on the available benchmarks. Reserve their reasoning modes for non-voice agent steps (planning, summarization, post-call analysis) where 5–200+ second TTFT is acceptable. Use their non-reasoning variants for the user-facing voice turn, and use cheaper fast models (Haiku 4.5, GPT-5.4 nano, Grok 4.1 Fast, Gemini 3.1 Flash-Lite) for routing and high-volume work.

Business Impact: Why Accuracy Matters

- Finance: Mistakes in AI-generated advice on loans, interest rates, or transactions can lead to compliance issues and financial losses. Banks typically use high-accuracy models and validate responses with real-time data sources or human review.

- Healthcare: AI in medical support must be highly reliable. Even the best models (~80% accuracy) can still make errors, so they should be used to assist, not replace human professionals. A voice agent might draft an answer, but a curated medical database or human expert should verify before providing final information.

Cost Analysis

Pricing per Million Tokens

Each model has different pricing, especially the proprietary ones offered via API. The table below summarizes the API usage costs (in USD per 1 million tokens processed). “Input” refers to prompt tokens and “output” refers to generated tokens. For reference, 1 million tokens is roughly 750k words (about 3,000-4,000 pages of text).

| Model | Context Window | API Price (per 1M tokens) | Notes |
|---|---|---|---|
| Grok 4.1 Fast | 2M | $0.20 (input), $0.50 (output) | Best-price 2M context; xAI; strong for long voice sessions. |
| GPT-5.4 nano | 400k | $0.20 (input), $1.25 (output) | Cheapest OpenAI; 10% cached input; classification / sub-agent ideal. |
| Gemini 3.1 Flash-Lite Preview | 1M | $0.25 (input), $1.50 (output) | Cheapest Tier-1; implicit caching free, explicit 75% off. |
| DeepSeek V4-Flash | 128k+ | $0.14 (input), $0.28 (output) | Replaces V3.2-Exp; cache-hit pricing ~90% off; very low all-in cost. |
| Gemini 3 Flash Preview | 1M | $0.50 (input), $3.00 (output) | Reasoning toggle; non-reasoning mode for voice; high TPS. |
| GPT-5.4 mini | 400k | $0.75 (input), $4.50 (output) | Mid-tier OpenAI; non-reasoning for voice. |
| Kimi K2.6 | 256k | $0.95 (input), $4.00 (output) | Open-weight; strong agentic / function calling. |
| Claude Haiku 4.5 | 200k | $1.00 (input), $5.00 (output) | Cache read 10%; 1h TTL 2× write; released October 15, 2025. |
| GPT-5.4 (flagship) | 1M | $2.50 (input), $15.00 (output) | Replaces GPT-4o/GPT-4.1; flagship model with adjustable reasoning effort. |
| Claude Sonnet 4.6 | 1M | $3.00 (input), $15.00 (output) | Strong reasoning; reasoning mode TTFT ~135s (voice-unsuitable). |
| Claude Opus 4.7 | 1M | $5.00 (input), $25.00 (output) | Released April 16, 2026; voice-unsuitable due to latency; reserve for offline steps. |
| Llama 4 Maverick (Fireworks) | 1M | $0.07–$0.90 range (provider-dependent) | Open-weight; Groq deprecated March 9, 2026; Fireworks/Together still serve. |
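To turn per-token prices into a per-call or monthly figure, multiply token counts by the listed rates. The sketch below copies a few rows from the table above; the function names and the example workload are hypothetical, and it ignores prompt caching, which is covered separately.

```python
# USD per 1M tokens as (input, output), copied from the pricing table above.
PRICES = {
    "Grok 4.1 Fast": (0.20, 0.50),
    "GPT-5.4 nano": (0.20, 1.25),
    "Gemini 3.1 Flash-Lite Preview": (0.25, 1.50),
    "Claude Haiku 4.5": (1.00, 5.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Uncached API cost in USD for the total tokens billed during one call."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


def monthly_cost(model: str, calls: int,
                 input_tokens_per_call: int, output_tokens_per_call: int) -> float:
    """Monthly cost in USD, assuming every call has the same token profile."""
    return calls * call_cost(model, input_tokens_per_call, output_tokens_per_call)


if __name__ == "__main__":
    # Hypothetical workload: 100,000 calls/month, 60,000 billed input tokens
    # and 2,000 output tokens per call (the prompt is re-sent on every turn).
    for name in PRICES:
        print(f"{name}: ${monthly_cost(name, 100_000, 60_000, 2_000):,.2f}")
```

Under that assumed workload, input tokens dominate the bill because the system prompt and history are re-sent every turn, which is why the caching discounts discussed in this guide matter more for voice than output pricing does.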

Reasoning Mode: Voice-Unviable

Every flagship LLM in 2026 ships with a “reasoning” or “thinking” toggle that allocates extra compute before producing the first token. Reasoning produces meaningfully better answers on hard tasks, but TTFT explodes:

- Claude Sonnet 4.6 – non-reasoning TTFT 1.36s, reasoning TTFT ~135s

- GPT-5.4 – high-effort reasoning TTFT ~166s on Artificial Analysis

- Gemini 3 Flash Preview – non-reasoning TTFT ~1.35s; reasoning TTFT higher, voice-unsuitable

- GPT-5.4 mini – medium-effort reasoning TTFT ~8.24s; minimal effort suitable for voice

For voice agents, treat reasoning as off by default for the user-facing turn. Use it only on offline agent steps (planning, summarization, post-call analysis) where the latency cost is acceptable. Provider-specific controls: Anthropic’s extended thinking budget, OpenAI’s reasoning effort (minimal/low/medium/high), Gemini’s thinkingBudget / minimal mode.

Prompt Caching Math (Voice-Critical)

Voice agents replay the same system prompt every turn. Without caching, those tokens are re-charged at full price each time. With caching, repeated prompt tokens are read at roughly 10% of base price for the cache TTL window; some providers (Anthropic, below) also charge a one-time write premium on the request that creates the cache.

Provider policies as of April 2026:

- Anthropic: 5-minute TTL cache write 1.25× base, 1-hour TTL write 2× base, read 0.1× base. The default reverted from 1h back to 5m in March 2026 – set TTL explicitly when constructing requests.

- Gemini: Implicit caching applies automatically with no code change and zero cost (1,024-token minimum on Flash, 2,048 on Pro). Explicit caching offers a 75% discount on Gemini 2.5+ models with a 32,768-token minimum.

- OpenAI: Cached input billed at 10% of standard input price; no API change required.

- DeepSeek: Cache hit roughly 90% off miss price (e.g., V4-Flash ~$0.014 hit vs. $0.14 miss).

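Break-even on a cache write arrives quickly. In units of one uncached prompt read, caching costs w + 0.1n after n hits versus 1 + n without it, so the write premium is recovered after one hit at a 1.25× write and after two hits at a 2× write. For a voice agent with a 5K-token system prompt and 30-second turn rate, a typical 10-minute call has 20 turns: with a 1.25× write and 0.1× reads, the system prompt costs 1.25 + 19 × 0.1 = 3.15 prompt-equivalents instead of 20, a reduction of roughly 84%.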
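The caching arithmetic can be checked with a few lines of code. This is a sketch under stated assumptions: costs are expressed in units of one uncached read of the system prompt, the multipliers are the Anthropic figures listed above (1.25× or 2× write, 0.1× read), every turn after the first is assumed to land inside the TTL window, and the function names are illustrative.

```python
import math


def break_even_hits(write_mult: float, read_mult: float = 0.1) -> int:
    """Smallest number of cache hits at which caching is no more expensive.

    In units of one uncached prompt read:
      cached   = write_mult + hits * read_mult
      uncached = 1 + hits
    """
    return max(0, math.ceil((write_mult - 1) / (1 - read_mult)))


def cached_prompt_cost(turns: int, write_mult: float,
                       read_mult: float = 0.1) -> float:
    """System-prompt cost over a call: one cache write, then cache reads."""
    return write_mult + (turns - 1) * read_mult


def savings(turns: int, write_mult: float, read_mult: float = 0.1) -> float:
    """Fraction of system-prompt cost saved versus re-sending it uncached."""
    return 1 - cached_prompt_cost(turns, write_mult, read_mult) / turns


if __name__ == "__main__":
    turns = 20  # 10-minute call at one turn every 30 seconds
    for write_mult in (1.25, 2.0):
        print(write_mult, break_even_hits(write_mult),
              f"{savings(turns, write_mult):.1%}")
```

With these inputs the write premium is recovered after one hit at 1.25× and two hits at 2×, and a 20-turn call saves about 84% and about 80% of system-prompt cost respectively. Providers that charge no write premium do slightly better.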

Voice Latency Budget

Roughly 70% of voice agent latency comes from LLM inference. A workable target budget for sub-1-second perceived latency:

- VAD: ~50ms

- STT: ~150ms

- LLM TTFT: ~400ms (this is the squeeze point)

- TTS: ~150ms

- Network round-trip: ~50ms

- Total: ~800ms

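That budget is achievable only with non-reasoning LLM variants and well-tuned streaming. Of the TTFT figures in the latency table, only Llama 4 Maverick on fast hosts (0.4–0.5s) lands near the 400ms target; with Grok 4.1 Fast at 0.59s, the same pipeline totals roughly 1.0s.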
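The budget is simple addition, so it is easy to test a candidate model's measured TTFT against it. A small sketch, using the stage targets listed above; the helper names are illustrative.

```python
# Target budget per stage in milliseconds, as listed above.
BUDGET_MS = {"vad": 50, "stt": 150, "llm_ttft": 400, "tts": 150, "network": 50}


def total_latency_ms(llm_ttft_ms=None, budget=BUDGET_MS) -> int:
    """Sum the pipeline stages, optionally substituting a measured TTFT."""
    stages = dict(budget)
    if llm_ttft_ms is not None:
        stages["llm_ttft"] = llm_ttft_ms
    return sum(stages.values())


def max_ttft_ms(target_total_ms: int, budget=BUDGET_MS) -> int:
    """TTFT headroom left once the non-LLM stages are accounted for."""
    other = sum(v for k, v in budget.items() if k != "llm_ttft")
    return target_total_ms - other


if __name__ == "__main__":
    print(total_latency_ms())      # the target budget itself
    print(total_latency_ms(590))   # with a 0.59s TTFT from the latency table
    print(max_ttft_ms(1000))       # TTFT allowed for a 1-second total
```

Because the non-LLM stages already consume 400ms in this budget, a 1-second end-to-end target leaves at most 600ms for TTFT.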

Context Window and Input Length

The context window determines how much conversation history or documents the model can consider at once. Current voice-suitable models sit between 128k (DeepSeek V4-Flash) and 2M tokens (Grok 4.1 Fast), with most at 1M. Larger context enables sophisticated use cases (feeding entire knowledge bases, long dialogs, persistent user preferences) but increases per-turn cost whenever the model re-reads the full context. Prompt caching mitigates most of that cost, as covered above. For a typical customer-support call (10–30 minutes, a few thousand tokens of dialog), any current voice-suitable model has more than enough context; context-window choice becomes a factor only when stuffing large knowledge bases, long multi-session memory, or document attachments into every turn.

API vs. Self-Hosting: Which is More Cost-Effective?

Using a managed API (OpenAI, Anthropic, Google, etc.) means paying per token, which is easy to manage and automatically scales with usage. Self-hosting an LLM means running it on your own servers or cloud machines, avoiding token fees but paying for the infrastructure instead. The cost trade-off depends on usage volume:

- For low to moderate usage, APIs are often cheaper and easier (you don’t pay for idle time, and don’t need MLOps engineers to maintain the model). There’s also no large up-front investment.

- For very high usage, self-hosting can save money in the long run: at large scale, owning the means of generation can be more cost-efficient than paying per token.

There’s a middle ground: use a managed cloud service like AWS Bedrock, which offers pay-per-token access to Claude, Llama, Nova, and other models, or spin up your own instances on AWS/GCP/Lambda/RunPod to self-host open-weight models like Llama 4 Maverick, DeepSeek V4-Flash, Kimi K2.6, Qwen 3.5, or GLM-4.6. With Bedrock, the convenience of pay-per-use pricing comes with enterprise features like VPC integration and data residency controls. For self-hosting, the math depends on GPU rental rates and utilization. April 2026 GPU pricing:

- H100: $1.49–$2.99/hr on specialist providers (Lambda, RunPod, CoreWeave); $3–$4/hr on AWS/GCP

- B200: from ~$2.65/hr reserved up to ~$3.79/hr on-demand on specialist providers

- GB200: reservation-only on most providers; availability expanding through 2026

Keep GPUs busy close to 24/7 and the effective token cost can approach the theoretical hardware cost. With idle time, usage-based API pricing usually wins.
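The utilization effect can be made concrete by converting a GPU's hourly rate into an effective price per million tokens. This is a rough sketch: the aggregate throughput figure is a hypothetical input you would measure on your own deployment, and the calculation ignores engineering time, storage, networking, and the fact that large models may need several GPUs per replica.

```python
def self_hosted_cost_per_million(gpu_hourly_usd: float,
                                 tokens_per_second: float,
                                 utilization: float) -> float:
    """Effective USD per 1M generated tokens on a rented GPU.

    tokens_per_second is the aggregate throughput across all batched
    requests while the GPU is busy; utilization is the fraction of each
    hour the GPU actually spends serving traffic (0 < utilization <= 1).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return gpu_hourly_usd / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical: an H100 at $2.99/hr sustaining 1,000 tokens/s when busy.
    for utilization in (1.0, 0.5, 0.1):
        cost = self_hosted_cost_per_million(2.99, 1000, utilization)
        print(f"utilization {utilization:.0%}: ${cost:.2f} per 1M tokens")
```

The effective price scales inversely with utilization, so a GPU that is busy 10% of the time costs ten times as much per token as one that is fully loaded.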

Another consideration is rate limits and scaling. API providers have request quotas. OpenAI’s enterprise tier on GPT-5.4 supports 10,000+ requests per minute and tens of millions of tokens per minute, but a large call center needs to negotiate enterprise quotas explicitly.

Use our AI voice agent calculator to get a clear monthly estimate based on your model, usage, and setup.

Deployment Factors: Cloud vs. Self-Hosted, Security & Scalability

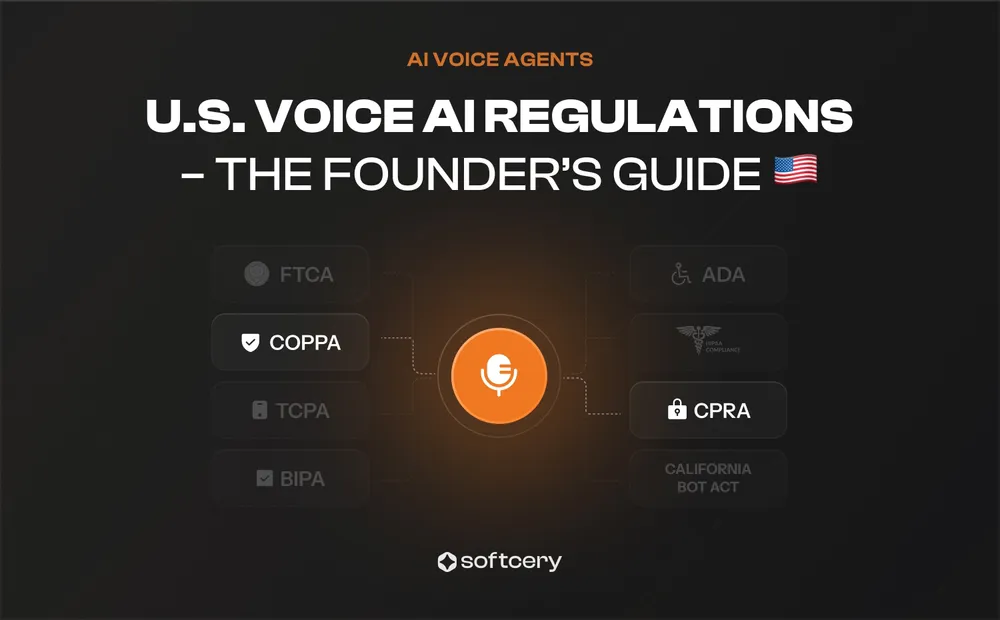

Selecting an LLM for enterprise deployment involves more than just model quality; infrastructure, data security, compliance, and scalability are equally critical considerations.

Cloud-based APIs (OpenAI, Anthropic, Google, etc.):

Pros: Easiest to integrate (simple API calls), no ML ops burden, and providers optimize the model’s performance for you. They also handle scaling. If your voice agent’s call volume spikes, the cloud service can accommodate (within your rate limit) by allocating more compute. Updates and improvements to the model are delivered automatically.

Cons: Ongoing cost per use, potential data privacy concerns (since user queries are sent to a third-party server), and dependence on the provider’s uptime and policies. While major providers have strong security, some organisations are uneasy sending sensitive data off-site. Compliance requirements can be a barrier - for example, a healthcare company may be legally restricted from using a cloud AI unless certain certifications are in place. There’s also less flexibility: you can’t customize the model beyond what the API allows.

Self-hosting (on-prem or private cloud):

Pros: Full control over data (nothing leaves your servers, which aids privacy and regulatory compliance), and potentially lower marginal cost at scale as discussed. You can also customize the stack - for instance, run real-time voice ASR (speech recognition) and the LLM on the same machine to minimize latency, or fine-tune the model on proprietary data. It also allows using open-source models that aren’t available via API. Data residency and sovereignty concerns are alleviated since you decide where the system runs (important for EU GDPR, which requires controlling cross-border data flow; self-hosting lets you keep data in-country).

Cons: The team now owns operations and security. An open-source model server, like any sensitive system, can leak data or face attacks if it’s not set up securely. Maintaining uptime, applying model updates, and scaling the system require skilled engineers. Hardware cost and maintenance add up – running a fleet of GPUs or specialized AI accelerators has a meaningful monthly bill. If usage is sporadic or low-volume, those resources sit idle while still costing money. The quality gap to top proprietary models has narrowed dramatically: GLM-4.6 and GLM-5 from z.AI now lead open-source benchmarks (Quality Index 49.64, comparable to Claude Opus 4.6), DeepSeek V4-Flash matches mid-tier proprietary chat quality, and Llama 4 Maverick competes on latency for self-hosted voice. For most voice use cases in 2026, the gap is no longer a blocker – operations complexity is.

Security & Compliance

All major cloud LLM providers have taken steps to alleviate data privacy concerns. OpenAI, Google, and Anthropic state that API data is not used to train their models (unlike consumer-facing free services). OpenAI even offers a “zero data retention” mode for enterprises where they don’t store API prompts at all. Microsoft Azure OpenAI service will sign a BAA (Business Associate Agreement) for HIPAA compliance in healthcare and ensures data is siloed to specific regions. These measures mean using a closed model via API can meet strict requirements, but it relies on trusting the vendor and legal safeguards. Some organizations, especially in finance and government, still prefer that sensitive data never leaves their own infrastructure - hence a tilt toward open-source models they can deploy internally.

Scalability

Cloud APIs abstract scaling away – teams only need to watch their rate limits. For high-throughput scenarios, request higher quotas or move to an enterprise tier. Self-hosting requires scaling out infrastructure. The good news is that LLM workloads scale horizontally, so to handle N concurrent calls you can run N instances of the model (or fewer, if each instance can serve several requests concurrently). Tools like Kubernetes or cloud auto-scaling groups can spin up more instances when load increases. One latency difference: cloud API calls are typically routed to geographically load-balanced servers, whereas if you self-host in one region, users far from it may see more network latency (unless you deploy servers in multiple regions). For a voice agent, this is usually minor compared to generation time.
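For self-hosted capacity planning, the instance count follows directly from peak concurrency and per-instance capacity. A minimal sketch; the function name, the headroom percentage, and the per-instance concurrency are assumptions you would replace with measured values.

```python
def instances_needed(concurrent_calls: int,
                     calls_per_instance: int,
                     headroom_pct: int = 20) -> int:
    """Model instances required to serve a peak number of concurrent calls.

    headroom_pct adds spare capacity for traffic spikes and rolling
    restarts. Integer arithmetic avoids floating-point rounding surprises.
    """
    if concurrent_calls < 0 or calls_per_instance <= 0 or headroom_pct < 0:
        raise ValueError("inputs must be non-negative, capacity positive")
    demand = concurrent_calls * (100 + headroom_pct)
    capacity = 100 * calls_per_instance
    return -(-demand // capacity)  # ceiling division


if __name__ == "__main__":
    # Hypothetical: 100 concurrent calls, 8 calls per instance, 20% headroom.
    print(instances_needed(100, 8))
```

An autoscaler would apply the same calculation continuously against observed concurrency rather than a fixed peak.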

Fine-tuning and Customisation

Many providers now allow limited fine-tuning of their models. For example, OpenAI offers fine-tuning on the current GPT-5.4 family (the GPT-4.1 family was retired from ChatGPT on February 13, 2026; API access sunsets on a rolling schedule). Anthropic still does not allow direct fine-tuning of Claude Sonnet 4.6 or Claude Haiku 4.5, but AWS Bedrock supports fine-tuning select models including Claude (with guardrails). Open-weight models like Llama 4 Maverick, DeepSeek V4-Flash, Kimi K2.6, and GLM-4.6 can be fine-tuned freely on private data, which helps when the model needs to learn domain-specific terminology or style (e.g., fine-tuning Llama 4 Maverick on past support transcripts to better handle industry-specific vocabulary).

When you fine-tune a closed model through an API, your custom dataset is sent to the provider. Make sure the data isn’t used to retrain the provider’s base model (typically, it isn’t). Fine-tuning usually creates a separate model instance that’s only accessible to you.

Important: Fine-tuning is complex, resource-intensive, and requires significant expertise to get right. For most domain-specific use cases, follow this recommended approach:

- Start with prompt engineering and context augmentation - Provide relevant domain-specific information directly in the prompt. This is the simplest and fastest approach for most scenarios.

- Move to RAG (Retrieval-Augmented Generation) - If you have a large knowledge base, implement RAG to dynamically retrieve and inject relevant context into prompts. This scales better than stuffing everything into the prompt.

- Consider fine-tuning only as a last resort - Fine-tuning should be reserved for cases where the model fundamentally needs to learn new patterns, terminology, or behavior that can’t be achieved through prompting or RAG. It requires substantial training data (typically thousands of examples), computational resources, ongoing maintenance, and expertise to avoid degrading the model’s general capabilities.
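The retrieve-then-inject pattern behind RAG can be illustrated without any infrastructure. The sketch below is a toy: it ranks documents by keyword overlap, whereas production systems typically use embedding similarity and a vector store. All names and the prompt wording are illustrative.

```python
import re
from typing import List


def tokenize(text: str) -> set:
    """Lowercase a string and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return up to k documents sharing the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    scored = [item for item in scored if item[0] > 0]  # drop non-matches
    scored.sort(key=lambda item: item[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def build_prompt(query: str, documents: List[str], k: int = 2) -> str:
    """Inject only the retrieved snippets into the prompt, not the whole KB."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nCustomer: {query}"


if __name__ == "__main__":
    kb = [
        "Refunds are processed within 5 business days.",
        "Our office is open Monday to Friday.",
        "Shipping takes 3 days.",
    ]
    print(build_prompt("How long do refunds take?", kb, k=1))
```

Only the matching snippet reaches the model, which keeps per-turn input tokens (and therefore latency and cost) much lower than sending the full knowledge base on every turn.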

Tool Integration and Function Calling

Many voice agents need the LLM to interface with external systems (booking appointments, fetching account info, etc.). All major models discussed in this guide – including GPT-5.4 family, Claude Sonnet 4.6, Claude Haiku 4.5, Gemini 3 Flash, Grok 4.1 Fast, Kimi K2.6, and open-weight models like Llama 4 Maverick, DeepSeek V4-Flash, and GLM-4.6 – support function calling and structured output generation natively. This means they can reliably output JSON, call predefined functions, and interact with external APIs as part of their standard capabilities.

For voice agent applications, function calling enables the LLM to:

- Query databases for customer information

- Book appointments or update calendars

- Process transactions or check account balances

- Retrieve real-time data (weather, stock prices, etc.)

- Trigger workflows in CRM or ticketing systems

The quality of function calling varies by model. The Berkeley Function Calling Leaderboard (BFCL v3) ranks GLM 4.5 at 76.7% and Qwen3 32B at 75.7% among open models; closed-source leaders include Claude Sonnet 4.6, GPT-5.4, and Gemini 3 Flash. For voice-specific tool reliability, see τ-bench / τ²-bench / τ³-bench (Sierra) and VoiceAgentBench.
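On the application side, function calling comes down to validating the model's structured output and dispatching it to real code. The sketch below is provider-agnostic and makes several assumptions: the `{"name": ..., "arguments": {...}}` payload shape is a simplification (each provider wraps tool calls in its own envelope), and the two business functions are hypothetical stubs.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical business functions; real ones would call a CRM or calendar API.
def check_order_status(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}


def book_appointment(date: str, time: str) -> dict:
    return {"confirmed": True, "date": date, "time": time}


TOOLS: Dict[str, Callable[..., Any]] = {
    "check_order_status": check_order_status,
    "book_appointment": book_appointment,
}


def dispatch_tool_call(raw: str) -> dict:
    """Validate and run one tool call that the model emitted as JSON.

    Errors are returned as data rather than raised, so they can be fed
    back to the model and the voice agent can recover mid-call.
    """
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        return {"error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"error": "tool call must be a JSON object"}
    func = TOOLS.get(call.get("name"))
    if func is None:
        return {"error": f"unknown tool: {call.get('name')}"}
    args = call.get("arguments", {})
    if not isinstance(args, dict):
        return {"error": "arguments must be a JSON object"}
    try:
        return {"result": func(**args)}
    except TypeError as exc:  # missing or unexpected arguments
        return {"error": f"bad arguments: {exc}"}
```

Restricting dispatch to an explicit registry means a hallucinated tool name produces a recoverable error instead of executing arbitrary code.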

Implementation Strategies for AI Voice Agents

Now that we’ve analysed the key performance metrics, costs, and business impact of different LLMs, the next step is to focus on how to effectively implement AI voice agents using these models. Successful deployment requires careful consideration of model selection, performance optimisation, system integration, security, and continuous improvement.

Integrating AI Voice Agents with Business Systems

For AI voice agents to be truly effective, they must seamlessly integrate with existing business systems. This includes customer databases, CRMs, and support ticketing platforms.

- CRM integration allows AI to retrieve customer history and personalize responses, improving engagement;

- ERP and order management systems enable AI to check order status, process refunds, or update customer records in real-time;

- Function calling and API integration let AI trigger automated actions, such as scheduling appointments or fetching account details.

For voice-based AI to interpret user requests correctly and sound natural, it’s critical to choose the right Speech-to-Text (STT) and Text-to-Speech (TTS) solutions. See our comprehensive STT and TTS selection guide comparing 13 providers with latency benchmarks, accuracy metrics, and cost analysis.

Measuring Success and Continuous Improvement

AI voice agents require ongoing optimisation to maintain high-quality interactions. Businesses should track key performance indicators (KPIs) to evaluate effectiveness:

- Accuracy and coherence - how well the AI understands and responds to inquiries;

- Response time - measuring delays between user input and AI-generated responses;

- Customer satisfaction - evaluating feedback to determine if users find AI interactions helpful;

- First-call resolution rate - analyzing how many queries are resolved without escalation to human agents.

To improve performance over time, businesses should continuously monitor AI-generated interactions, analyse customer feedback, and refine AI responses. This might involve updating prompts, fine-tuning models, or introducing new automation workflows based on observed usage patterns. For comprehensive guidance on monitoring and debugging AI agents in production, including tracing, evaluation frameworks, and tool comparisons, see our observability guide. For voice-specific testing methodologies and quality metrics, see our voice agent testing guide.
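Two of the KPIs above, response time and first-call resolution rate, can be computed directly from call logs. A minimal sketch, assuming a hypothetical `CallRecord` shape; real logs will carry more fields and the names here are illustrative.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class CallRecord:
    turn_latencies_ms: List[int]  # delay before each agent response
    resolved: bool                # the caller's issue was resolved
    escalated: bool               # the call was handed to a human agent


def first_call_resolution_rate(calls: List[CallRecord]) -> float:
    """Share of calls resolved without escalation to a human agent."""
    if not calls:
        return 0.0
    resolved = sum(1 for c in calls if c.resolved and not c.escalated)
    return resolved / len(calls)


def all_turn_latencies(calls: List[CallRecord]) -> List[int]:
    """Flatten per-call latencies into one list of per-turn samples."""
    return [ms for c in calls for ms in c.turn_latencies_ms]


def percentile(values: List[int], pct: int) -> int:
    """Nearest-rank percentile; pct is an integer from 1 to 100."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]
```

Tracking a high percentile such as p95 alongside the median is useful for voice because a small share of slow turns is enough to make a call feel broken.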

Key Considerations for a Successful AI Voice Agent Deployment

By following these strategies, businesses can ensure their AI deployments are both scalable and cost-effective while maintaining a high standard of user experience.

- Align model selection with business needs - fast models for simple tasks, highly accurate models for complex interactions;

- Optimize token usage - use only the necessary context to control costs and speed up responses;

- Ensure seamless system integration - connect AI voice agents with internal databases, CRMs, and APIs to enable automated workflows;

- Prioritize security and compliance - ensure that sensitive customer data is handled according to regulatory requirements;

- Monitor, measure, and refine AI performance - use real-time analytics and customer feedback to improve AI interactions over time.

Use our AI voice agent calculator to model technology, throughput, and expenses based on your chosen LLM and deployment strategy.

Your AI Voice Agent Roadmap

If you’re considering AI voice agents but aren’t sure how to begin, you’re not alone. The key to a successful implementation is starting small, testing results, and scaling efficiently.

Follow this simple roadmap to guide your business through the AI voice agent implementation process:

- Define your use case: Identify where AI can add the most value (customer support, sales, finance, etc.).

- Choose the right LLM: Match your needs with models that balance speed, accuracy, and cost.

- Integrate with your systems: Connect AI with your CRM, ticketing platform, or database for seamless automation.

- Optimize for performance: Reduce latency, improve accuracy, and track performance metrics.

- Test and scale: Start with a pilot, refine your approach, and expand AI adoption based on real results.

Which LLM Should You Choose for Your AI Voice Agent in 2026?

Whether you prioritize real-time responsiveness, enterprise-grade accuracy, or cost-effective self-hosting, the right choice depends on your specific business needs. Let’s summarize the best models for different use cases and help you make an informed decision.

| Model | Best For | Cost Efficiency | Ideal Use Cases |
|---|---|---|---|
| Gemini 3.1 Flash-Lite | Cheapest Tier-1; balance of speed and accuracy | Very High | Omnichannel customer service, technical support, real-time routing |
| Grok 4.1 Fast | Very large context (2M) with the best price in tier | Very High | Long customer calls, legal review, contract analysis, sales transcripts |
| GPT-5.4 nano | Cheapest OpenAI model for sub-second voice | Very High | Sub-agents, classification, latency-sensitive routing |
| Claude Haiku 4.5 | Fast with good accuracy at affordable price | High | High-volume production, professional services, customer support |
| GPT-5.4 (flagship) | Strong reasoning + tool use, 1M context | Moderate | Mixed workloads, enterprise applications, complex queries (non-voice steps) |
| Gemini 3 Flash | Reasoning toggle; voice in non-reasoning mode | High | Production voice with selective deep reasoning on offline steps |
| Claude Sonnet 4.6 | Premium reasoning for complex AI agents | Low | Complex enterprise workflows, advanced reasoning, regulated industries |
| Claude Opus 4.7 | Highest accuracy on hardest tasks | Lowest | Offline agent steps only (TTFT 134s+); planning, post-call summarization |
| Kimi K2.6 | Strong agentic capabilities with good value | High | Interactive chat, complex workflows, agentic applications |
| DeepSeek V4-Flash | Very low cost with cache-hit pricing | Very High | High-volume applications, experimentation, cost-sensitive deployments |
| Llama 4 Maverick / GLM-4.6 | Open-weight, self-hosting for maximum privacy | Very High (self-hosted) | Finance, healthcare, government, or privacy-first enterprises |
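A shortlist like this can also be derived mechanically from your own constraints. The sketch below filters a few models by TTFT and output price, using figures copied from the latency and pricing tables in this guide; the `ModelSpec` type and `shortlist` function are illustrative, and the approximate (~) TTFT values are treated as exact for simplicity.

```python
from typing import List, NamedTuple


class ModelSpec(NamedTuple):
    name: str
    ttft_s: float          # non-reasoning / minimal-effort TTFT
    input_usd: float       # per 1M input tokens
    output_usd: float      # per 1M output tokens


# Figures copied from the latency and pricing tables in this guide.
MODELS = [
    ModelSpec("Grok 4.1 Fast", 0.59, 0.20, 0.50),
    ModelSpec("Claude Haiku 4.5", 0.70, 1.00, 5.00),
    ModelSpec("GPT-5.4 nano", 1.14, 0.20, 1.25),
    ModelSpec("Gemini 3 Flash Preview", 1.35, 0.50, 3.00),
    ModelSpec("Claude Sonnet 4.6", 1.36, 3.00, 15.00),
]


def shortlist(max_ttft_s: float, max_output_usd: float,
              models: List[ModelSpec] = MODELS) -> List[str]:
    """Names of models within both limits, fastest first."""
    fits = [m for m in models
            if m.ttft_s <= max_ttft_s and m.output_usd <= max_output_usd]
    return [m.name for m in sorted(fits, key=lambda m: m.ttft_s)]


if __name__ == "__main__":
    print(shortlist(max_ttft_s=0.8, max_output_usd=5.0))
```

Latency and price are only a first filter; accuracy and function-calling reliability still need to be checked against the benchmarks discussed earlier.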

LLM choice determines how your voice agent thinks and responds. The complete picture includes platform selection, STT/TTS configuration, observability, compliance frameworks, cost management, and scaling infrastructure.

About Softcery: We’re the AI engineering team that founders call when other teams say “it’s impossible” or “it’ll take 6+ months.” We specialize in building advanced AI systems that actually work in production, handle real customer complexity, and scale with your business. We work with B2B SaaS founders in marketing automation, legal tech, and e-commerce—solving the gap between prototypes that work in demos and systems that work at scale. Get in touch.

Frequently Asked Questions

The best LLM depends on priorities – speed, accuracy, or cost. For real-time, high-volume interactions, low-latency models like Grok 4.1 Fast, Claude Haiku 4.5, GPT-5.4 nano (minimal effort), or Gemini 3.1 Flash-Lite Preview work well. For accuracy and reasoning, Claude Sonnet 4.6, GPT-5.4, or Gemini 3 Flash (in non-reasoning mode for the voice turn) are stronger choices. Open-weight options like Llama 4 Maverick, DeepSeek V4-Flash, Kimi K2.6, and GLM-4.6 fit teams that need full data control or self-hosting. Critical voice constraint: avoid high reasoning effort for the user-facing turn – it adds seconds to minutes of TTFT depending on model.

The key latency metrics are TTFT (Time to First Token), which measures how fast a model starts responding, and TPS (Tokens per Second), which measures how quickly it generates output. For natural, real-time conversations, end-to-end latency under 1 second is ideal, which leaves roughly a 400ms LLM TTFT budget after VAD, STT, TTS, and network overhead. Accuracy benchmarks such as MMLU-Pro, GPQA, BFCL v3 (function calling), τ-bench / τ²-bench / τ³-bench (Sierra tool-agent benchmarks with voice support), and VoiceAgentBench help compare reasoning, instruction-following, and tool-use ability across models. For voice agents specifically, function-calling reliability matters as much as raw reasoning quality.

Whether self-hosting is cheaper than a managed API depends on usage volume and GPU utilization. For smaller or moderate workloads, managed APIs (OpenAI, Anthropic, Google, AWS Bedrock) are usually cheaper and easier since they scale automatically and require no infrastructure management. At large scale with sustained GPU utilization, self-hosting open-weight models (Llama 4 Maverick, DeepSeek V4-Flash, Kimi K2.6, GLM-4.6) can lower long-term costs. April 2026 GPU rentals: H100 $1.49–$2.99/hr on specialist providers (Lambda, RunPod, CoreWeave), B200 $2.65–$3.79/hr. Self-hosting breaks even only when GPUs stay busy – idle time flips the math back toward API pricing.

Major providers like OpenAI, Anthropic, and Google state that API data isn’t used to train their base models. Fine-tuning usually creates a separate, private model instance for the organization. OpenAI offers a “zero data retention” mode for enterprises. Microsoft Azure OpenAI signs BAAs for HIPAA. AWS Bedrock provides VPC integration and data residency for Claude, Llama, Nova, and others. For stricter compliance or data sovereignty needs, self-hosting open-weight models (Llama 4 Maverick, DeepSeek V4-Flash, Kimi K2.6, GLM-4.6, Qwen 3.5) ensures all data stays within owned infrastructure.

You can use our AI voice agent calculator to estimate monthly expenses. It factors in model type, token usage, and deployment strategy (API vs. self-hosted) so you can forecast both performance and operational costs before launching your system.